The 10% Problem: AI's Value Gap Is Wider Than Anyone Is Admitting

The most consequential technology of our generation is concentrating its benefits at the top, and may be eroding cognitive capacity everywhere else.

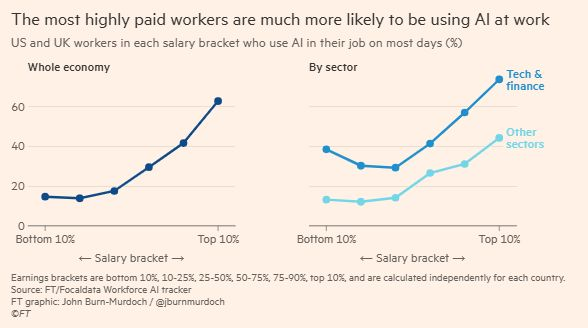

- The FT/Focaldata Workforce AI Tracker shows 62% of top-decile earners use AI daily at work, versus 13% at the bottom decile. That's not adoption lag. That's a structural gap.

- The scaffolding that lets AI compound (peer visibility, psychological safety, domain expertise, metacognitive skill) clusters at the top. The tools have reached everyone. The conditions haven't.

- Research published in Nature and by Anthropic, along with David Brooks's comments on Prof G, suggests the 80% using AI as a thinking replacement may be losing cognitive capacity, not gaining productivity.

- Product builders have more leverage over this gradient than any policymaker. Who you design for, what friction you keep, and what you measure determine which side of the gap your product accelerates.

The Financial Times just published the cleanest picture I've seen of where AI is actually landing. John Burn-Murdoch plotted it using the FT/Focaldata Workforce AI Tracker: the share of US and UK workers who use AI on most days at work, broken out by salary bracket. Among the bottom 10% of earners, about 13% use AI that often. Among the top 10%, it's 62%. In tech and finance, the top decile climbs past 70%. In every other sector, the top decile barely reaches 48%.

That's not a rollout curve. That's a caste line.

I want to be careful here, because I know how it sounds. Product people are trained to read charts like these as adoption lag — a gap that closes as tools get better and cheaper. But that's the wrong read. AI has been "better and cheaper" for two years. ChatGPT is free. Copilot ships inside every Office license. Claude runs on your phone. The tools have already reached everyone. The value hasn't.

This is the piece I've been avoiding writing. I'll tell you why near the end.

AI value isn't spreading. It's stacking.

Strip the FT chart of its sector breakdown and the shape is brutal: a nearly fivefold gap (62% versus 13%) inside a single economy, for a tool that costs less than a streaming subscription.
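For anyone who wants to check the arithmetic, here is a minimal sketch. It uses only the two shares quoted above; the `gap` helper and the dictionary keys are names made up for illustration, not anything from the FT or Focaldata.

```python
# Shares of workers using AI on most days, as quoted in this article
# from the FT/Focaldata Workforce AI Tracker.
daily_use = {
    "bottom_decile": 0.13,
    "top_decile": 0.62,
}


def gap(top, bottom):
    """Return (ratio, percentage-point difference) between two usage shares."""
    return top / bottom, (top - bottom) * 100


ratio, points = gap(daily_use["top_decile"], daily_use["bottom_decile"])
print(f"ratio: {ratio:.1f}x, gap: {points:.0f} percentage points")
# prints "ratio: 4.8x, gap: 49 percentage points"
```

The ratio and the percentage-point difference tell slightly different stories: the first says how lopsided usage is, the second says how many workers per hundred sit on the wrong side of it.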

Layer in what the research has been saying all year:

- 84% of the world has never used AI. Only 0.3% pay for it. The "AI is everywhere" narrative is a story product leaders tell themselves inside San Francisco and London. For most of the planet, it's still something other people do.

- 80% of ChatGPT users sent fewer than 1,000 messages in all of 2025, per Benedict Evans's analysis. Even the market leader hasn't solved the engagement problem — and this is among people who already signed up.

- METR's RCT on experienced developers found zero measurable productivity gain. They felt faster. The output didn't move.

- Microsoft Copilot plateaued at 30% weekly active usage after six months — inside enterprises with full licenses and mandatory rollouts, per The Information. The other 70% stopped opening it.

- BCG found employee-centric organizations are 7x more likely to be AI mature. Not 7% more likely. Seven times. The technology doesn't predict the outcome. The culture around it does.

- HBR confirmed peer influence is the single most powerful predictor of AI adoption. When learning stays private, adoption stalls. It compounds only where people see their peers using AI visibly and successfully.

Read those back-to-back and it's obvious. To get real value from AI right now, you need a stack of conditions most workers don't have: peers who are visibly succeeding with it, a culture safe enough to experiment, domain expertise deep enough to verify outputs, metacognitive skill sharp enough to articulate what you're trying to do, and time carved out to practice. Those conditions don't appear randomly. They concentrate in the same places the FT chart highlighted: tech, finance, top decile, big-city offices with in-house AI councils already moving faster than their training decks.

The bottom 80% of the workforce isn't locked out because the tools are too expensive. They're locked out because the scaffolding around the tools is invisible if you're not already inside it.

This has direct product implications. If you're shipping an AI feature today, your daily active users look disproportionately like the top-right corner of Burn-Murdoch's chart. That's not your total addressable market. That's your current addressable market — the slice that already has the conditions for your product to land. Every dashboard you look at is biased toward them. Every design decision that optimizes for the power user pulls you further from the people the FT chart says aren't coming.

And for those who do use it — the cognitive bill is coming due.

There's a second body of research that sits uncomfortably next to the inequity data, and most of us in product have been quiet about it.

David Brooks said it most plainly on the Prof G podcast. Talking about how humans will split around AI:

"I think that 20% will use AI to think a lot more, and their mental and cognitive capacity and productivity will be astounding. I think 80% of humans — I'm just guessing — don't like to think. They're what psychologists call cognitive misers. So they'd rather not. They can use AI to substitute for their thinking. And some of the new research that's just come out suggests that the decline in motivation to think among people who use AI is massive. People just do not — not only do they not want to think, they lose the capacity to think hard."

"A caste system where you have 20% who are cognitive superstars and 80% who are cognitive backward — you've got problems."

I don't love all his language. But the underlying claim is backed by research we've covered here:

- "Against Frictionless AI" in Nature (Inzlicht & Bloom): removing struggle from AI workflows destroys the learning that builds expertise.

- Shen & Tamkin RCT: AI users learned 17% less than the control group — without any measurable efficiency gain. They got less sharp and weren't more productive.

- Anthropic's AI Fluency research: polished AI outputs reduce critical evaluation by users. The prettier the output, the less likely you are to challenge it.

- The Nature median-pull study: scientists using AI receive 26% more citations, but their work converges toward a common position. More output. Less originality. The individual gets a raise. The field loses the edges.

- Helen Edwards at the Artificiality Institute frames this as the collapse of cognitive sovereignty: the ability to remain the author of your own thinking. Most AI products collapse the solution space to a single "best" answer. That's a design choice. And it trains the user to stop exploring.

The full shape of the problem: the 10-20% who benefit most are using AI as a thinking amplifier, a partner that expands their range while they stay the author of the work. Among the remaining 70-80%, those who use it at all treat it as a thinking replacement, a shortcut that produces an answer and closes the loop. The first group is getting sharper. The second group is, measurably, getting duller. And the people not using it at all are falling further behind, whichever direction the users are heading.

A caste system is the wrong metaphor only because it implies stability. What we're actually building is a cognitive gradient that compounds in both directions at once.

What I've been avoiding saying

I've worked with AI tools every day for two years. I co-host a podcast about this. On paper, I'm exactly the worker the FT chart is celebrating — top decile of users, top decile of beneficiaries, the 20% side of Brooks's split.

Here's what none of the research quite captures. The instincts I built over twenty years — the ones that let me read a room, flag a weak argument, tell when a participant is lying to themselves, feel when a deck is off before I can say why — those instincts feel like quicksand when I'm forced to work with a virtual chief of staff that wants me to articulate every part of my brain and life.

Every decision gets interrogated. Every intention gets a follow-up question. Every piece of context I've internalized over decades has to be typed out for a system that holds none of it. I used to make a call in half a second and move on. Now I stop, explain why I made the call, defend it against a counterfactual the model generated, and watch my own confidence erode in real time. The expertise didn't go anywhere. The fluency of using it did.

I used to think this was a me problem. It isn't. It's what happens when a tool built on explicit articulation meets a practitioner whose whole edge is tacit knowledge. Seniority was built out of compressed experience. AI demands decompressed experience. The bill for that decompression is paid in self-trust, and it's higher than anyone is admitting.

So when I read the FT chart, I don't just see an inequality problem. I see workers already on the winning side of the gap absorbing a hidden cost nobody's quoting them. And I see another population — the 80% below them — being sold this technology as the solution to their productivity gap, when the research says it's more likely to accelerate their cognitive decline than their output.

The Comfort Crisis connection

Michael Easter's The Comfort Crisis argues that modern humans are worse off physically because we engineered discomfort out of our lives — temperature regulation, ambulatory effort, hunger, boredom. The body evolved for friction. Remove it and the body breaks in quiet ways: metabolic disease, anxiety, attentional collapse.

The case he makes for the body is now being made, in real time, for the mind. AI is frictionless thinking at scale. The Inzlicht & Bloom paper, the Shen & Tamkin RCT, the motivation-to-think decline Brooks referenced — they're the cognitive version of the epidemiological curve Easter tracked. We already know what happens when a species engineers discomfort out of its physical life.

The product design question that follows: what friction should your AI feature keep — deliberately, explicitly — so the person using it ends up sharper instead of softer? If you're not asking that question about your product, someone will eventually ask it about your users.

So what happens when only 10% win?

What happens in a world where only 10% of workers capture real value from the most consequential technology of our generation?

Medical advances and everyday convenience will spread — they always do, unevenly, on a lag. Drug discovery will accelerate. Radiology will get better. Customer service will get cheaper. Those gains are real. But they're downstream benefits — the technology working for people, without requiring them to work with it. The direct productivity and cognitive gains — the ones that determine who gets promoted, who gets hired, who builds wealth — those are not spreading. And by the research we have, they may never spread organically, because they depend on conditions the bottom 80% can't conjure on their own.

If you build AI products, this is your strategic question:

- Who are you designing for? If your product works best for users who already have peer support, metacognitive training, and domain depth, you're building for the 10%. Know which group you're building for.

- What friction have you kept? If your AI collapses every decision to a single "best" answer, you're optimizing for output and degrading the user. Expanding the solution space does the opposite.

- What are you measuring? Completion rate, token volume, session length reward the replacement pattern. They can't distinguish a user who got sharper from one who got duller. If your eval has no cognitive-outcome dimension, you're flying blind.

- Who is on your adoption path? BCG and HBR data is unambiguous: the decisive variable is peer visibility and psychological safety, not tooling. If your rollout strategy is a training deck, you're building for the 10% who were going to figure it out anyway. The 80% need visibility mechanisms — shared workflows, public experiments, visible failure. That's a product feature, not an HR program.

The larger signal I'm tracking in my research into AI value in enterprise deployments is this: the organizations closing the gap aren't the ones with better tools. They're the ones that treated the scaffolding — the peer conditions, the cultural safety, the deliberate friction — as a product problem, not a change management problem. That shift in framing is where the opportunity lives.

We can build AI that forces thinking instead of replacing it. We can design for the 80%, not the 10%. We can measure whether our users end up sharper. Or we can keep shipping for the top of the chart and call the gap a go-to-market problem.

I know which of those choices compounds. I'm less sure which one we're actually making.


Hosted by Arpy Dragffy and Brittany Hobbs. Arpy runs PH1 Research, a product adoption research firm, and leads AI Value Acceleration, enterprise AI consulting.
