Context Models Are the Unlock to Consistent AI Value

Three years of treating AI like a smarter Google. MCP and knowledge graphs are about to change what AI can actually do — engineers cracked it first, knowledge workers are next.


For three years, every executive I’ve worked with has had the same complaint about AI: we can’t trust it for anything that matters. The demos are stunning. The pilots show promise. Then production hits — hallucinated facts, missing context, generic output that ignores everything specific about the business. So organizations adapted. They use AI like a smarter Google. Ask a question, get an answer, paste it somewhere, move on.

The data backs this up. KPMG’s 2025 global trust study of 48,000 people across 47 countries found 66% use AI regularly but only 46% trust it. People are getting less trusting as adoption rises. McKinsey’s 2026 trust survey shows enterprise maturity creeping from 2.0 to 2.3 on a five-point scale. Only one-third of organizations rate themselves above 3 in strategy or governance. Sentiment has been collapsing while spend has been climbing.

The reason isn’t model capability. We’ve been deploying these systems like search engines instead of like colleagues. A colleague who knows your business, has access to your repositories, and has read your past reports is useful. A colleague with no context is an expensive intern who keeps asking you the same questions.

That gap is closing right now, and it’s going to change knowledge work in a way the last three years did not.

Engineers Cracked the Context Problem First

Engineering teams figured this out before anyone else, because their work was the easiest to instrument. Code lives in version control. Tests live in repositories. Specifications live in tickets. The whole apparatus of professional software development is already structured data, just waiting to be fed to a model.

The numbers are stark. GitHub’s own telemetry shows Copilot generates 46% of all code in repos where it’s installed — 61% in Java. Across the broader developer population, 22% of merged code is AI-authored. By the end of 2025, 85% of professional developers used AI tools regularly, 51% daily.

The dominant working pattern is now Cursor for editing plus Claude Code for complex multi-file work, with 70% of engineers using 2-4 AI coding tools simultaneously. Many leading teams now ship most of their code through AI agents that orchestrate across the codebase, with the engineer acting as reviewer and refiner rather than typist.

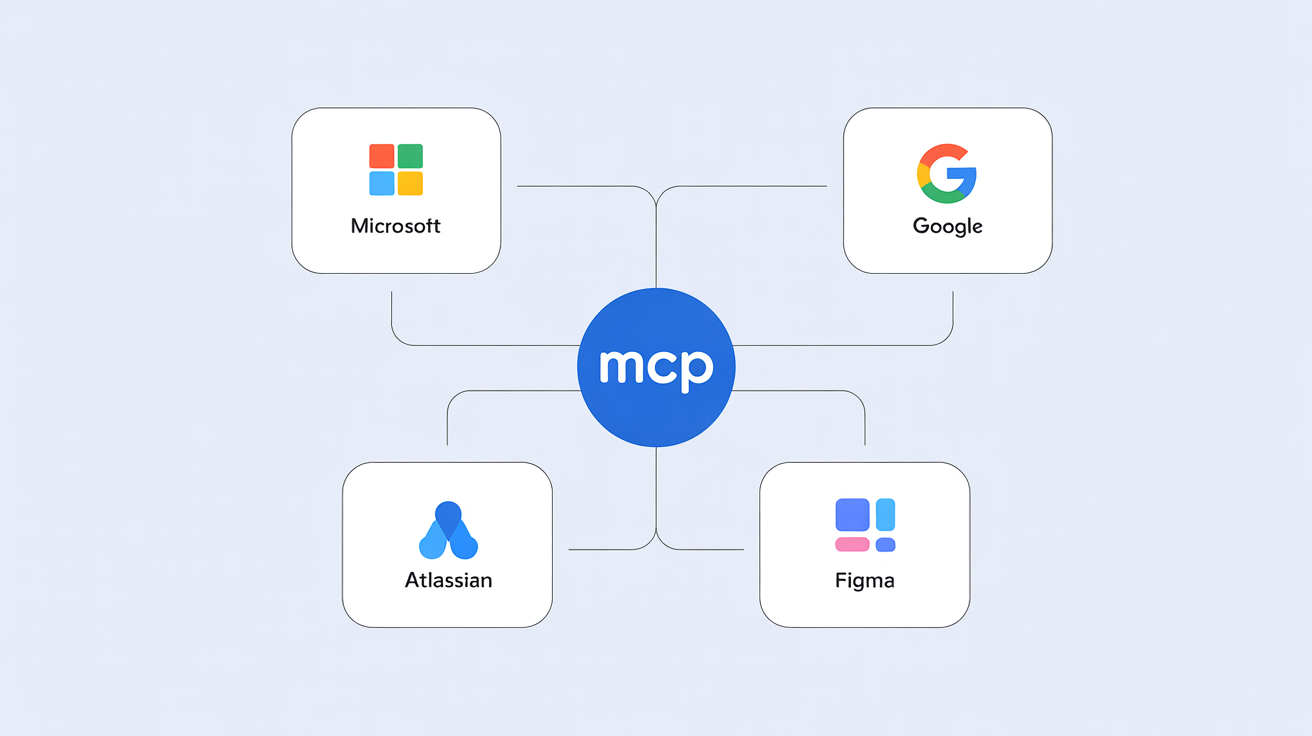

Better models alone didn’t get us here. The unlock was Anthropic’s Model Context Protocol — MCP — released in November 2024. OpenAI added MCP support across its Agents SDK in March 2025. Google DeepMind followed in April. There are now over 10,000 active MCP servers and 97 million monthly SDK downloads across Python and TypeScript. In December 2025, Anthropic donated MCP to the Linux Foundation as a vendor-neutral standard.

What MCP actually does is simple to describe and revolutionary in practice. It lets any AI assistant connect to any data source through a single open protocol. Before MCP, every integration was a custom build. Now an agent can read your GitHub history, query your Jira, search your documents, and run your tests through a uniform interface the model can use without bespoke glue code.
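
To make the "uniform interface" concrete, here is a minimal sketch of the message shape. MCP is built on JSON-RPC 2.0, and a tool invocation uses the `tools/call` method with a tool name and an arguments object. The tool names and arguments below (`search_issues`, `run_tests`) are hypothetical, invented for illustration. A real server publishes its own tool list, which a client discovers with `tools/list`.

```python
import json
from itertools import count

_ids = count(1)  # monotonically increasing JSON-RPC request ids


def tool_call(name: str, arguments: dict) -> dict:
    """Build an MCP-style JSON-RPC 2.0 request that invokes one tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


# Hypothetical tools on two different servers: the envelope is identical,
# only the tool name and its arguments change.
jira_request = tool_call("search_issues", {"query": "project = PAY AND status = Open"})
ci_request = tool_call("run_tests", {"path": "tests/billing"})

for request in (jira_request, ci_request):
    print(json.dumps(request))
```

The point of the sketch is what is missing: there is no Jira-specific or CI-specific client code. The agent only needs to know the envelope and the tool descriptions the server advertises.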

This is what makes Claude Code, Cursor, and the broader agentic coding ecosystem work. They give the model the full repository, the test suite, the recent commits, the open issues, the team’s coding conventions. Output quality jumped because the model finally had the context a senior engineer would have.

Why Knowledge Workers Haven’t Scaled — Until Now

Engineering work is structured. Knowledge work is not.

A market research synthesis depends on judgment about which prior work matters. A customer success report draws on twelve months of conversation history scattered across email, Slack, Notion, and someone’s brain. A strategic recommendation pulls from past decks, audit findings, and unwritten lore about which clients hate which vendors.

This is why upskilling tracks have stalled in non-technical functions. McKinsey reports 77% of organizations plan to upskill workers, but only 1% describe themselves as mature on AI deployment. The gap isn’t training material. Knowledge workers have nothing structured to give the model. They paste a brief into ChatGPT, get a generic response, and conclude AI doesn’t understand their business. They’re right. It doesn’t.

Engineers got context infrastructure first because their context was already in machine-readable form. Knowledge workers’ context has been scattered across half a dozen SaaS tools, none of which talked to each other.

That’s about to change.

2026 Is the Inflection Point. Atlassian Just Made It Real.

A knowledge graph maps structured entities — people, projects, decisions, assets, deadlines — and the relationships between them. Standard RAG retrieves text snippets that match your query by semantic similarity. A graph lets the model reason across connections: who owns this project, what decisions shaped this design, which dependencies are downstream of this change. That reasoning requires traversal over structured relationships, not pattern-matching on words.
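
The difference between matching text and traversing relationships can be sketched in a few lines. This is a toy illustration, not how Teamwork Graph or any vendor product is implemented. Every entity and relation name below is made up.

```python
from collections import defaultdict, deque

# (source, relation, target) triples; all entities are invented for illustration.
TRIPLES = [
    ("dana", "owns", "checkout-redesign"),
    ("checkout-redesign", "shaped_by", "decision: drop guest checkout"),
    ("payments-api", "depends_on", "checkout-redesign"),
    ("mobile-app", "depends_on", "payments-api"),
    ("q3-launch", "depends_on", "mobile-app"),
]


def build_index(triples):
    """Index edges by (target, relation) so relationships can be walked backwards."""
    incoming = defaultdict(list)
    for source, relation, target in triples:
        incoming[(target, relation)].append(source)
    return incoming


def downstream_of(entity, triples, relation="depends_on"):
    """Breadth-first traversal: everything that transitively depends on `entity`."""
    incoming = build_index(triples)
    seen, queue, order = {entity}, deque([entity]), []
    while queue:
        current = queue.popleft()
        for dependent in incoming[(current, relation)]:
            if dependent not in seen:
                seen.add(dependent)
                order.append(dependent)
                queue.append(dependent)
    return order


def owner_of(entity, triples):
    """Single-hop lookup: who owns this entity, if anyone."""
    return next((s for s, r, t in triples if r == "owns" and t == entity), None)


print(owner_of("checkout-redesign", TRIPLES))
print(downstream_of("checkout-redesign", TRIPLES))
```

In this toy graph, `q3-launch` is three hops away from `checkout-redesign` and shares no words with it. A similarity search over text has no particular reason to surface it, while the traversal reaches it by following `depends_on` edges.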

The cross-platform potential is where this gets powerful. Connect Figma to your project system and the model can trace the path from a design decision to the ticket it spawned, without you explaining the history. Add Google Workspace (Docs, Sheets, Meet transcripts, email threads) and it knows what was agreed, what changed, where the disagreement landed. Layer in Jira, Confluence, and Bitbucket and you have the full arc from brief to shipped code, structured and queryable.

At Team ’26 in May, Atlassian announced general availability of Teamwork Graph, the first large-scale production deployment of this model for the knowledge work market. Built on Jira, Confluence, Bitbucket, and 50+ third-party connectors including SharePoint, Slack, and Salesforce, the graph contains over 150 billion connections between people, work, goals, code, and content. It’s MCP-exposed, which means any AI assistant that speaks the protocol can read from it.

The performance numbers are the part of the announcement worth real attention. Grounding AI responses in Teamwork Graph data delivered 44% more accurate results while using 48% fewer tokens.

Token cost is the central enterprise pain point right now. The FinOps Foundation’s 2026 report identifies AI and data platforms as the fastest-growing new category of enterprise spend. Average enterprise AI budgets jumped from $1.2M in 2024 to $7M in 2026, with inference accounting for 85% of that. Token prices fell 99.7% in a year, but enterprise AI cloud expenditure tripled — because consumption volumes exploded. The 44%/48% Atlassian finding is the economics fix: richer context produces better answers in fewer tokens.
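
The arithmetic behind "prices fell, spend tripled" is worth working through. The sketch below assumes spend is simply price times volume and that the price drop and the spend increase cover the same period and workload. That is a simplification for illustration, not something the cited reports state.

```python
from fractions import Fraction

price_drop = Fraction(997, 1000)   # token prices fell 99.7%
spend_multiple = Fraction(3)       # AI cloud expenditure tripled

# Assumption: spend = price * volume, so volume = spend / price.
price_multiple = 1 - price_drop                    # new price / old price
volume_multiple = spend_multiple / price_multiple  # new volume / old volume

budget_2026 = Fraction(7_000_000)      # average enterprise AI budget, 2026
inference_share = Fraction(85, 100)    # share of budget spent on inference
inference_spend = budget_2026 * inference_share

print(f"price multiple:  {float(price_multiple):.3f}x")
print(f"implied volume:  {float(volume_multiple):,.0f}x")
print(f"inference spend: ${float(inference_spend):,.0f}")
```

Under those assumptions, a 99.7% price drop alongside a tripling of spend implies roughly a thousandfold increase in tokens consumed, and 85% of a $7M budget is $5.95M going to inference. That is why fewer tokens per answer matters more than cheaper tokens.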

This is what Juan Sequeda has been arguing for two decades — and what we covered in S02E03 of the Product Impact Podcast. Juan’s blunt take on prompt engineering as a substitute for structured knowledge: “Hope is an interesting strategy. It’s not one I add to my strategy.”

Atlassian is the first major work platform to ship this for the broad market. Microsoft, ServiceNow, Google, and Notion are building in the same direction. The graph layer is becoming infrastructure — and MCP is making it composable across every vendor in the stack.

What This Looks Like For Knowledge Workers

If you’re a market researcher, product manager, strategy consultant, or customer success leader — what does this look like in practice?

Concretely:

- A market research synthesis drawing on every report your firm has written in the last three years, weighted by relevance to the current question.

- A customer account review pulling account history, support tickets, NPS responses, and meeting transcripts to surface patterns no individual analyst would have seen.

- A board memo that knows what every prior board memo argued, what was contested, and what the actual outcomes were.

- A pitch deck that pulls accurate, current numbers from your CRM, your finance system, and your product analytics — without you flipping between four browser tabs.

This is the engineer experience translated. “Give the model my whole repo” becomes “give the model my whole reports folder, our entire customer history, every meeting transcript, our voice and tone guide.”

Can knowledge workers capture the same productivity gains engineers did? My honest read: yes, but the path is harder. Engineering has clear evaluation — the test passes or it doesn’t. Knowledge work is judgment-graded; a bad analysis isn’t obviously bad until it leads to a bad decision. The leverage is real, but the discipline required to use it well is higher.

My own experience confirms the direction. I’ve built a complex repository spanning GitHub, Claude Code, and a fleet of agents, and the more context the system has — templates, examples, prior work, structured connectors — the better the outputs and the lower the token cost. Atlassian’s 44%/48% numbers describe exactly what I see in practice.

The Mindset Shift Is Harder Than the Tooling

Working with a context-rich AI system is not like prompting a chatbot. It’s like running a team of capable juniors. You don’t tell the AI what to do — you tell it what you’re trying to accomplish, what good looks like, and where the inputs live. Then you review the first draft and refine.

What this means in practice:

- You become an orchestrator. Your value shifts from execution to defining intent, structuring inputs, and reviewing outputs. The work happens in parallel, often without you watching it.

- You write the brief, not the doc. A clear specification produces a usable first draft. A vague brief produces garbage you have to redo. The discipline of being clear up front is the discipline of getting AI leverage at all.

- You become a reviewer and refiner. The model writes the first version. Your job is to know what’s missing, what’s wrong, and what the next iteration needs.

- You set quality bars explicitly. What does “good” look like? Which past work is the benchmark? Which tone is appropriate? These were once implicit in your individual practice. Now they have to be encoded so the system can use them.
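
One way to picture "encoding" a brief and a quality bar is as a small structured object that refuses to render when the essentials are missing. The class and field names here are hypothetical, chosen for illustration. This is a sketch of the habit, not a prescribed format.

```python
from dataclasses import dataclass, field


@dataclass
class Brief:
    goal: str                      # what you are trying to accomplish
    good_looks_like: list[str]     # the explicit quality bar
    inputs: list[str]              # where the source material lives
    benchmarks: list[str] = field(default_factory=list)  # past work to match
    tone: str = "plain, direct"

    def missing(self) -> list[str]:
        """Names of required sections left empty; a vague brief fails here."""
        required = {
            "goal": self.goal,
            "good_looks_like": self.good_looks_like,
            "inputs": self.inputs,
        }
        return [name for name, value in required.items() if not value]

    def render(self) -> str:
        """Turn the brief into text an agent can be handed, or raise if incomplete."""
        if self.missing():
            raise ValueError(f"brief is incomplete: {self.missing()}")
        lines = [f"Goal: {self.goal}", "Good looks like:"]
        lines += [f"- {item}" for item in self.good_looks_like]
        lines += ["Inputs:"] + [f"- {item}" for item in self.inputs]
        if self.benchmarks:
            lines += ["Benchmarks:"] + [f"- {item}" for item in self.benchmarks]
        lines.append(f"Tone: {self.tone}")
        return "\n".join(lines)


brief = Brief(
    goal="Summarize churn drivers for the Q3 account review",
    good_looks_like=["Every claim cites a ticket or transcript", "Under two pages"],
    inputs=["support tickets, last 12 months", "NPS responses", "meeting transcripts"],
)
print(brief.render())
```

The useful part is the `missing()` check: it forces the implicit parts of your practice (the goal, the quality bar, the inputs) to be written down before any work is delegated.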

If that sounds intimidating, the reframe is straightforward. It’s the same discipline you’d use to onboard a junior employee. Explain the work. Show examples. Set expectations. Review their first attempts. Give feedback. Iterate.

The difference is that with a knowledge graph behind it, the AI version of that junior can work in parallel, around the clock, on every workstream simultaneously. The leverage isn’t 1.5x. It scales with the number of workstreams you can brief and review.

What To Do Right Now

Most organizations are still buying AI as a point solution, still measuring success by chat usage, still treating prompt engineering as the central skill. The companies that figure out the context layer first will compound. The ones that don’t will look back at 2026 the way late mainframe holdouts looked back at 1985: they had the same access to the technology, used it wrong, and never made up the lost ground.

If you lead a team:

- Start building the graph. Connect your systems. Document the templates. Capture the examples. Make your work legible to a model that’s about to work alongside you.

- Decide what counts as “good” in your function — and write it down. Voice guides, evaluation rubrics, exemplar artifacts. These are the seed of every quality improvement you’ll see from AI in the next 18 months.

- Audit your token spend against grounded vs. ungrounded usage. The Atlassian numbers (48% fewer tokens) suggest grounding can cut token cost nearly in half. That’s a budget recovery hiding in plain sight.
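
A token audit of this kind can start very small. The sketch below assumes you can export usage records tagged by whether the request was grounded in connected context. The record layout is hypothetical and the numbers are placeholders, picked so the result lines up with the 48% figure above. They are not measurements.

```python
from collections import defaultdict
from statistics import mean

# Placeholder usage records: (workflow, grounded?, tokens used). Not real data.
USAGE = [
    ("account-review", True, 5_200),
    ("account-review", False, 10_000),
    ("board-memo", True, 7_800),
    ("board-memo", False, 15_000),
]


def tokens_by_grounding(records):
    """Average tokens per request, split by whether the request was grounded."""
    buckets = defaultdict(list)
    for _workflow, grounded, tokens in records:
        buckets[grounded].append(tokens)
    return {grounded: mean(values) for grounded, values in buckets.items()}


def reduction(averages):
    """Fractional token reduction of grounded requests relative to ungrounded."""
    return 1 - averages[True] / averages[False]


averages = tokens_by_grounding(USAGE)
print(f"grounded avg:   {averages[True]:,.0f} tokens")
print(f"ungrounded avg: {averages[False]:,.0f} tokens")
print(f"reduction:      {reduction(averages):.0%}")
```

A real audit would compare like with like, for example the same workflow before and after connectors were added, and would track answer quality alongside token counts so that a cheaper answer is not mistaken for a better one.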

If you’re an individual contributor:

- Practice the orchestrator discipline now. Write clearer briefs. Treat your prompts like job specs you’d give a new hire.

- Build a personal context repository. Your past work, your style, your reference materials, the patterns you reuse. This is the moat between you and someone using ChatGPT raw.

- Choose where you land on the spread. Microsoft’s 2026 Work Trend Index puts 16% of users at “Frontier Professional” — orchestrating agents at scale. The other 84% are still treating AI as a chatbot. The gap between those two groups is going to widen sharply over the next year.

Context models are the unlock to consistent AI value. The infrastructure is here. The question is whether you put your customers and teams in a position to succeed. This shift is monumental and requires much more than proving the model’s capability; it requires understanding how people fundamentally want to work.

Intent Is the Final Boss of AI Agents

Context will close most of the gap between AI demos and AI value, but it cannot close all of it. The missing piece is intent: the judgment calls, the decision-making protocols, the implicit weighting of which evidence matters and which stakeholder gets the final say. Those rarely show up in artifacts. They live in the person who made the deliverable, not in the deliverable itself. You can hand an agent the template, the data, the prior work, and the brand voice and still get a structurally correct, substantively wrong result.

Intent is the difference between an agent that copies the shape of past work and one that masters the agency and expertise behind it. There is no cheap unlock for that gap. Either you spend on inference, running the model longer with more reasoning until it triangulates intent from edge cases — which gets expensive fast — or you keep exceptional people in the orchestration seat, encoding intent in real time as the work unfolds. Most organizations will discover this in production, after committing the budget, the same way they discovered the token-cost problem.

Are You Concerned About Managing AI Agents?

If you’re inside an organization trying to figure out how to actually staff, train, and govern this transition, you’re not alone. Most leaders I talk to are working through the same awkward shift from doer to orchestrator. Skills are unevenly distributed, and the vendors selling tooling have no commercial incentive to slow down and teach the underlying skill.

We’re developing content and training to support that transition — practical material on writing briefs that produce usable drafts, structuring context so agents reach for the right inputs, and encoding intent so quality does not collapse the moment the human steps out of the loop. If that is the work in front of you, reach out via PH1 or reply through whichever channel surfaced this article. I want to hear what you’re running into.


Hosted by Arpy Dragffy and Brittany Hobbs. Arpy runs PH1 Research, a product adoption research firm, and leads AI Value Acceleration, enterprise AI consulting.
