Every CEO Will Post a Layoff Notice Like This. Here Is Why.

Brian Armstrong's all-employee memo is being read as a headcount story. It is the operationalization of an ideology that has been forming in Silicon Valley since 2023 — and the same ideology will reach every executive team, on a timeline set by competitive pressure, investor expectation, and the structure of AI investment already committed.

- On May 5, 2026, Coinbase CEO Brian Armstrong sent an all-employee email announcing a 14% reduction in headcount.
- Each of Armstrong’s principles maps to a distinct strand of thought that has been gathering force in Silicon Valley since 2023.
- Across the public commentary from this group of founders, executives, and investors, a shared diagnosis has emerged about what is actually broken at the AI inflection point.
- The ideology described above does not, by itself, force restructurings.
- The Armstrong letter is not the first of its kind.

Brian Armstrong’s May 5, 2026 all-employee memo has been covered as a headcount story. The 14% reduction is the number that traveled; the argument inside the same letter is what matters, because that argument — its three structural principles, its specific intellectual lineage, its diagnosis of what organizational layers cost in an AI-native context — is the clearest public statement any major technology CEO has made about how Silicon Valley intends to redesign the relationship between companies and the people working inside them.

This letter is going to be used as a template. Not because Armstrong invented these ideas — he didn’t — but because he put them into operational language, signed his name to them, and attached them to a company announcement in a way that gives every board member, investor, and executive team in Silicon Valley a legible precedent. The ideology it contains has been forming for three years. The competitive and capital pressures driving it have been building for two. The next 100,000 people who receive a layoff notice in tech will receive it because of the beliefs laid out in this letter, whether their CEO has read it or not.

For team leaders, the analysis below maps what is coming and why. For the people working inside these organizations, it is a warning. The metrics that determined job security in the last decade of technology careers — hours contributed, features shipped, commits logged, coverage sustained — are not the metrics the organizational model this letter describes is built on. The companies moving to that model are not calculating headcount against output. They are calculating leverage: the ratio between human judgment applied and organizational impact produced. The employees who survive and advance in this transition will be the ones who have repositioned themselves on that axis.

What Armstrong Actually Said

On May 5, 2026, Coinbase CEO Brian Armstrong sent an all-employee email announcing a 14% reduction in headcount.

The headcount number is the news cycle. The argument inside the same letter is the story. Armstrong outlined three structural principles for how Coinbase will operate from this point forward, and each one points to a specific belief about what makes an organization either compete or fall behind in 2026.

Principle one: a hard cap of five management layers below CEO and COO. Armstrong’s reasoning, in his own language: “Layers slow things down and create coordination tax.” He is naming hierarchy itself as a cost, not a feature. The argument is that depth of organizational structure correlates negatively with execution speed, and that the speed differential matters more now than at any point in the company’s history.

Principle two: the elimination of pure management roles. “Every leader at Coinbase must also be a strong and active individual contributor. Managers should be like player-coaches, getting their hands dirty alongside their teams.” Armstrong is rejecting management as a stand-alone function. In his framing, leadership without contribution is overhead.

Principle three: AI-native pods. “We’ll be concentrating around AI-native talent who can manage fleets of agents to drive outsized impact,” including, in Armstrong’s language, “one person teams” spanning engineering, design, and product management in a single role.

The framing that ties them together: “rebuilding Coinbase as an intelligence, with humans around the edge aligning it.”

The Armstrong letter announces a redesign of how Coinbase will operate, not only a reduction in its size: AI as the substrate of execution, minimal hierarchy, human judgment concentrated in fewer, higher-leverage roles. To understand why this letter will be repeated in some form by nearly every Silicon Valley CEO over the next 24 months, you have to understand the ideology Armstrong is operationalizing — and why the people who write these letters believe they have no real alternative.

The Three Pillars of the AI-Native Org Doctrine

Each of Armstrong’s principles maps to a distinct strand of thought that has been gathering force in Silicon Valley since 2023. None of these strands is new in 2026. What is new is that the AI capability curve has caught up to the ideology, making operationalization possible at companies of meaningful scale.

Pillar 1: Coordination Tax — The Anti-Management Argument

The intellectual lineage of “layers slow things down” runs through Reed Hastings’s Netflix-built doctrine of talent density (codified in No Rules Rules, 2020), Patrick Collison’s deliberate flatness at Stripe, and a decade of Silicon Valley discourse against bureaucratic accumulation. The core claim: every layer of management exists to coordinate work, and coordination scales worse than execution. Past a certain headcount, the marginal manager spends more time aligning the organization than producing output, and the organization spends more time aligning itself than serving the customer.

Marc Andreessen articulated the philosophical version in his October 2023 “Techno-Optimist Manifesto,” writing that “we believe central planning is a doom loop and decentralized markets are a form of intelligence.” The application to organizational design has been articulated repeatedly in the venture and operator commentary since: a company is itself a planning system, and the more layers it has, the closer it sits to centralized planning, with the same dysfunctions.

What AI changes is the calculation. Coordination layers existed because human work required hand-offs, and hand-offs required management. If AI absorbs a meaningful share of execution and the hand-offs become machine-readable, the rationale for human coordination layers weakens at every level simultaneously. Armstrong’s five-layer cap is the operational expression of this view: hierarchy is a cost we are willing to pay only up to a defined limit, because the marginal value of additional layers in an AI-native context is, in this framework, negative.

Pillar 2: Founder Mode — The Player-Coach Argument

Armstrong’s “no pure managers” principle traces directly to Paul Graham’s September 2024 essay “Founder Mode,” which argued that founders should not transition to a “manager mode” of leadership at scale because the loss of context and direct involvement degrades the company. Graham was reporting on a talk by Brian Chesky describing how he had returned Airbnb to founder-mode operation by removing layers and re-engaging directly with the work.

The essay landed inside an existing framework. Ben Horowitz had written for years (The Hard Thing About Hard Things, 2014, and subsequent commentary) about the difference between functional and managerial CEOs, arguing that the most effective leaders remained directly engaged with the substance of the work. The Stripe and Anthropic operating models, both well-documented in operator commentary through late 2025 and 2026, run on a similar premise: the most senior people in the company should be visible doing the work, not just directing it.

Armstrong’s “player-coach” formulation is a structural commitment, not a folksy metaphor. A manager whose value is purely directive, who does not also contribute output, has no defensible role in the organizational design. The implication for the existing management class is direct. People who were promoted into pure-management roles, often as a recognition of seniority or performance in a previous individual-contributor role, sit at the center of the principle being eliminated.

Pillar 3: AI as Workforce — The Leverage Argument

The “AI-native pods managing fleets of agents” language is the operational version of an argument Sam Altman articulated in his September 2024 essay “The Intelligence Age.” Altman’s claim: AI provides “abundance” of cognitive labor, and one person with AI access can produce what teams previously required. Aaron Levie has been writing publicly throughout late 2025 and 2026 about the implications for org design — that AI is not a productivity tool added to existing structures but a new substrate that requires the structures themselves to change. In Levie’s framing, the role of the individual contributor is starting to resemble that of a manager: someone who allocates tasks, coordinates agents, and exercises judgment rather than executes directly.

Nvidia CEO Jensen Huang has made the same argument from the infrastructure perspective. In March 2026, Huang projected that Nvidia would eventually run 7.5 million AI agents alongside 75,000 human employees — 100 agents for every person. “AI agents will be in every single part of every single company,” he said, positioning AI not as a tool but as the new operating layer: the company organized around fleets of agents has structurally lower coordination costs and structurally higher execution capacity than the company organized around human roles with AI tools attached.

The “one-person team” Armstrong describes (a single person spanning engineering, design, and product management with agent fleets) is not aspirational. It is the operating unit being deployed inside AI-native startups now. Sequoia Capital’s 2026 analysis found that AI-native startups ship three times faster than traditional companies with 60% fewer engineers. The economic gap is real, and the pods are an attempt to close it.
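The two Sequoia figures combine into a per-engineer gap larger than either number alone. The rough sketch below works through that arithmetic. It assumes “ships three times faster” can be read as three times the total output over the same period, which is an interpretation and not something the cited analysis states. The function name is illustrative.

```python
def per_engineer_multiple(speed_multiple: float, fewer_engineers: float) -> float:
    """Output per engineer relative to a traditional team.

    Assumption: "ships N times faster" is treated as N times the total
    output over the same period, which the source does not spell out.
    """
    if not 0 <= fewer_engineers < 1:
        raise ValueError("fewer_engineers must be a fraction in [0, 1)")
    remaining_share = 1 - fewer_engineers  # 60% fewer leaves 40% of the team
    return speed_multiple / remaining_share


implied = per_engineer_multiple(speed_multiple=3.0, fewer_engineers=0.60)
print(f"Implied output per engineer: {implied:.1f}x the traditional baseline")
```

Under that reading, three times the output from 40% of the engineers implies roughly 7.5 times the output per engineer.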

What These Founders Believe Is Slowing Their Companies Down

Across the public commentary from this group of founders, executives, and investors, a shared diagnosis has emerged about what is actually broken at the AI inflection point. The diagnosis has four components, and each one connects directly to a structural decision being made in letters like Armstrong’s.

Process accumulation. Companies that grew through the 2010s and early 2020s accumulated processes — reviews, approvals, sign-offs, status reports — that were rational individually and crippling in aggregate. AI does not respect process. An agent can produce output that requires human review, but the review chain that exists at most companies was designed for a much smaller volume of human-produced work. AI-augmented teams either drown in their own throughput or strip the review structure, and stripping the review structure typically means stripping the people who staff it. This is the unspoken half of “coordination tax.”

Specialization without integration. Functional silos — engineering, design, product, marketing, sales, finance — were efficient when each function had clear, slow-moving outputs. AI value tends to live across silos, in the gaps between how data moves and how decisions get made. Founders are increasingly arguing that the unit of operation should be cross-functional pods, not functional teams, and that the org structure should reward integration rather than specialization. The “one-person team” Armstrong describes is the limit case: integration so extreme that the entire pod is one person plus agents.

Pure managers as overhead. The cleanest expression of this concern lives in the Founder Mode tradition. Managers whose function is purely directive, who do not also produce output, are visible only as cost in an AI-augmented operating model. Their role was valuable in the era when management itself was a scarce skill and human coordination was the bottleneck. In an environment where coordination is structurally cheaper (because AI handles a meaningful share of it) and execution is the bottleneck, the calculation flips, and the person whose only function was coordination becomes the role with the weakest defense.

The wrong skill distribution. The most uncomfortable component of the diagnosis is that the existing workforce, even where it is well-intentioned and capable in its current roles, is not optimized for AI-native operations. Brad Gerstner of Altimeter Capital has argued throughout late 2025 and 2026 that the new performance metric for technology companies is revenue per employee, and that traditional companies are carrying substantial headcount that AI-native peers do not need. The implication being made explicit by this group is that the restructuring is not eliminating people who failed at their jobs. It is eliminating the demand for the jobs themselves, with the people attached.

This last component is what makes the current wave feel different from previous tech downcycles. The 2022-2023 layoffs were largely framed as right-sizing after over-hiring. The 2025-2026 wave is framed as redesigning around a new operating model. The first kind of layoff implies eventual rehiring as the cycle turns. The second kind does not.

Why This Becomes Inevitable in Silicon Valley

The ideology described above does not, by itself, force restructurings. What forces them is the combination of ideology with three structural pressures specific to Silicon Valley.

The first is the investor-driven metric shift. Public markets and venture capital have, through late 2025 and into 2026, increasingly oriented around revenue per employee as a primary efficiency metric. Brad Gerstner’s commentary from Altimeter, Bill Gurley’s blog and podcast appearances, and the broader investor discourse have aligned on the position that AI-native companies should be running at materially higher revenue per employee than traditional peers, and that valuations should reflect the gap. A CEO who maintains traditional headcount in a portfolio where AI-native peers are running at three to five times the revenue per employee will face a valuation discount whether or not the underlying business performance is comparable. Restructuring becomes the path to the multiple, not just the path to profitability.
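The size of that gap is easier to see with numbers. The sketch below uses entirely hypothetical figures ($1 billion in revenue and 4,000 employees, not drawn from any real company) to show the headcount at which a company would match a peer running at three or five times its revenue per employee. Holding revenue constant is a simplifying assumption, and the function names are illustrative.

```python
def revenue_per_employee(revenue: float, headcount: int) -> float:
    """Revenue divided by headcount."""
    if headcount <= 0:
        raise ValueError("headcount must be positive")
    return revenue / headcount


def headcount_to_match(headcount: int, peer_multiple: float) -> float:
    """Headcount at which revenue per employee reaches peer_multiple times
    today's figure, assuming revenue stays constant (a simplification)."""
    if peer_multiple <= 0:
        raise ValueError("peer_multiple must be positive")
    return headcount / peer_multiple


# Hypothetical company, for illustration only.
REVENUE = 1_000_000_000
HEADCOUNT = 4_000

baseline = revenue_per_employee(REVENUE, HEADCOUNT)
print(f"Baseline: ${baseline:,.0f} per employee")

for multiple in (3, 5):
    target = headcount_to_match(HEADCOUNT, multiple)
    reduction = 1 - target / HEADCOUNT
    print(f"{multiple}x peer: about {target:,.0f} employees ({reduction:.0%} fewer)")
```

Under these assumptions, matching a 3x peer at flat revenue means roughly two-thirds fewer employees, and matching a 5x peer means 80% fewer. Growing revenue with flat headcount closes the same gap from the other side; the sketch fixes revenue only to keep the arithmetic simple.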

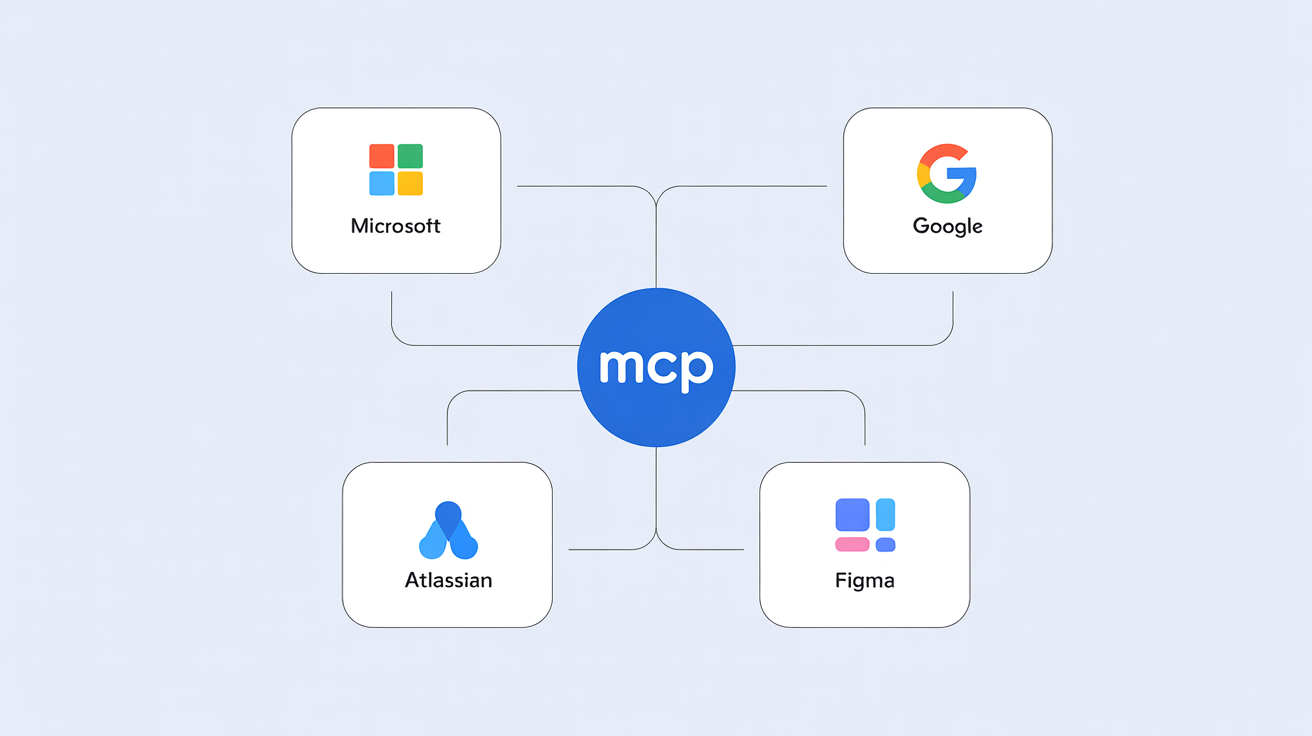

The second is the AI capex justification cycle. Frontier model providers — Microsoft, Google, Meta, Amazon, Anthropic — have collectively committed several hundred billion dollars to AI infrastructure investments. Those investments require enterprise customers to demonstrate productivity returns at a scale that justifies the model spend. Model spend is justified by enterprise adoption. Enterprise adoption is justified by productivity gains. Productivity gains are demonstrated, in the cleanest version of the proof, by reducing headcount while maintaining or growing output. The investment thesis for the frontier model market structurally requires the enterprise customer base to restructure, and pressure on each layer of the thesis flows downstream to the operating company. A CEO whose AI line keeps growing while the headcount line stays flat is going to face board questions that have a defined right answer.

The third is talent flight. Through late 2025 and 2026, the strongest engineers, designers, and operators have been migrating toward AI-native organizations — both startups and the AI-restructured incumbents — at compensation premiums that traditional companies cannot easily match. Companies that delay restructuring face slow-moving talent erosion at the same time they face compensation pressure, and the math forces a choice: restructure to free up budget for AI-native compensation, or watch the talent base degrade while the cost base stays inflated.

These three pressures compound. Investor pressure makes the metric mandatory, AI capex pressure makes the savings non-negotiable, and talent migration makes the timing urgent. None of them are pressures any individual CEO can deflect, and all of them point in the same direction.

There is also a personal dimension to this urgency that does not show up in the public commentary. An analyst for a major firm told me today that C-suite executives are under so much pressure to deliver results themselves that getting the pain out of the way early gives them a better chance of personal survival. The CEOs writing these letters are not only responding to structural pressures on their companies; they are responding to performance pressure on themselves, on a clock that runs faster than the company-level reckoning.

The Progression Through Late 2025 and 2026

The Armstrong letter is not the first of its kind. It joins a sequence that has been building through late 2025 and 2026, and the cumulative shape of that sequence is what gives the May 5 letter its meaning.

ServiceNow reported in early 2026 that 90% of internal L1 support cases were now resolved by AI agents, and the company has been progressively reshaping its support organization around that capacity. Intercom’s Fin agent now participates in 90% of customer conversations and autonomously resolves the majority of them — and the unit economics of maintaining traditional support headcount alongside that level of automation have become difficult to defend in any operating committee that is paying attention. Klarna’s well-publicized 2024 announcement that AI had absorbed work equivalent to 700 customer service agents was followed by partial walk-backs in 2025 as quality issues surfaced. The company continued through IPO preparation to position itself as AI-first, and the long-term direction of its headcount has remained consistent with the original announcement even as specific claims have been moderated.

That quality gap is the constraint that makes organizational redesign necessary in the first place. On May 5, 2026, Mark Cuban posted: “The biggest challenge for Enterprise AI, and AI in general, as of now, is that it’s still impossible to make sure that everyone gets the same answer to the same question, every time. AI doesn’t know the consequences of its output. Judgement and the ability to challenge AI output is becoming increasingly necessary, and valuable.” Cuban’s observation holds even inside the companies moving fastest: AI has not yet delivered the consistency infrastructure that would allow organizations to leave agents running autonomously across all functions. The value is being built by redesigning the organization itself — not deploying AI into existing structures, but constructing a new operating system with AI at its core and human judgment governing the edges.

Aggregate tech layoff trackers through late 2025 and into 2026 show a sustained pace of headcount reductions, with AI-related justifications appearing in announcement language with increasing frequency. The framing has shifted. In 2022 and 2023, layoff announcements cited macro conditions and over-hiring. In late 2025 and 2026, they cite operating model change, agent deployment, and repositioning around AI-native workflows. The April 2026 announcements from Meta and Microsoft, totaling 20,000 positions, marked the first time the scale of AI-attributed cuts drew mainstream labor-crisis framing. The executives writing these letters now believe, and are publicly declaring, that the workforce being reduced is not coming back in its previous form.

The first wave is happening in crypto, fintech, and consumer technology — companies with high exposure to AI-native competitors, aggressive AI investment as a share of operating cost, and boards directly applying AI productivity benchmarks to executive performance evaluations. Armstrong’s May 2026 letter is the leading edge of this wave, not its peak. The second wave, six to twelve months behind, is enterprise SaaS and professional services, where the unit economics of customer support, sales operations, and engineering velocity are reaching the same forcing functions. The third wave — regulated industries and legacy enterprises — faces a longer timeline but no exemption. The World Economic Forum’s February 2026 analysis on AI governance found that organizations in regulated sectors that are pulling ahead are doing so by investing in governance infrastructure that enables agentic deployment, which means the restructuring pressure is reaching them through a different door.

The Warning

The ideology is coherent. The structural pressures are real. The path Silicon Valley CEOs are walking down is not arbitrary; it follows from a shared diagnosis of what is broken and a shared belief about what works in its place. Whether that diagnosis is correct in every case is a question that will resolve itself over the next three to five years inside the financial statements of the companies making this bet. The relevant point for any executive team or workforce reading the Armstrong letter is that the same letter is going to arrive at their company on a timeline set by forces above the level of their own leadership team’s preferences.

The companies that handle this transition with their cultural integrity intact will be the ones honest about what is happening. They are not cutting costs to survive a downcycle. They are restructuring around an operating model their investors, peers, and advisors believe will be the only competitive structure five years from now. They are betting that the humans who remain — concentrated in judgment-intensive roles, working with AI as the substrate of execution — are doing work that compounds. The bet is unproven at scale. It is being placed anyway, because the cost of not placing it is structural irrelevance.

The employees affected by these restructurings did not make these decisions. They are bearing the cost of an investment thesis they had no role in setting, on a timeline determined by competitive and capital pressures none of them control. Leadership teams that say so directly — that distinguish their actions from cyclical right-sizing and name them as a structural redesign driven by external pressure — will keep the trust of the workforces that remain. The teams that present this as routine cost management will not.

The hardest thing to say to the people inside these organizations is also the most accurate: the professional calculus that governed the last decade of technology careers no longer applies. Hours worked, features shipped, commits logged, sprints completed — these were metrics in an economy where human labor was the primary unit of organizational output. The operating model Armstrong describes, and that this letter will help normalize across Silicon Valley, is built on a different unit. It evaluates people not by how much they did, but by how much the organization moved because of the quality of their judgment.

The organizational model that scaled through the 2010s — functional depth, specialist roles, managerial ladders, team size as a proxy for scope — is what the Armstrong letter and the letters that follow it are explicitly dismantling. The era of tech that most working professionals in this industry were trained for is ending in real time. The transition underway is from volume-oriented contribution to impact-oriented work, and the two require fundamentally different relationships with the job itself. Impact-oriented work is less legible, harder to demonstrate through activity, and demands the ability to articulate what changed because you were in the room — not just that you were in the room.

Any claim about exactly what the winning organizational design looks like five years from now is ahead of the evidence. What is clear is that the transition has started, the competitive pressure behind it is real, and the window for deliberate individual repositioning is open now and will not stay open indefinitely.

Hosted by Arpy Dragffy and Brittany Hobbs. Arpy runs PH1 Research, a product adoption research firm, and leads AI Value Acceleration, enterprise AI consulting.
