The Browser Is the New Battleground: How AI Is Moving Out of Chat and Into Your Life

Google killed the Chromebook and replaced it with the Google Book. Here's what it can do, why Google made the swap, and what the move signals about the next decade of AI.

- Three capabilities deserve attention because they change what a browser is.
- The Chromebook was a great device for the browser era.
- The Google Book is just one of many such bets.
- For most knowledge workers, ChatGPT and Claude are already the default AI tools.
- By 2030, the way we use AI today will look the way dial-up internet looks now.

The most interesting thing Google did this week was not release a new model. It was kill the Chromebook. In its place is the Google Book, a device with the browser still at the center but with AI baked into how you select, summon, and act on what is on your screen. Shake the device and the AI shows up. Highlight three different things across a page — a chart, a paragraph, a video clip — and the AI works on all of them at once. Open a flight aggregator and it offers to track the cheapest option for you.

None of that is revolutionary on its own. Add it up and the shift is real: the AI is no longer something you visit in a tab. It is something that operates inside the page you are already on. That is a much bigger deal than another model release.

What Is the Google Book Actually Capable Of?

Three capabilities deserve attention because they change what a browser is.

Shake-to-invoke. A physical gesture summons the AI from anywhere — any tab, any page, any moment. No keyboard shortcut, no menu, no separate app to open. The gesture matters because it removes the single biggest piece of friction in current AI use: stopping what you are doing, switching to a different surface, and typing in a question. Friction kills habits. Removing it builds them.

Multi-object selection. You can select multiple, unrelated things on a page — a paragraph, a chart, a video clip, a comment, a price — and treat them as one input to the AI. Today, asking AI to compare a competitor’s earnings transcript with their stock chart and an analyst’s tweet means three copy-pastes and a long prompt. On the Google Book, you select all three at once and ask a single question. Multi-object selection is the difference between AI as a tool you wrestle with and AI as a tool you reach for instinctively.

Contextual action suggestions. The Google Book watches what is on the screen and proposes useful actions before you ask. Read a contract and it offers to flag any clauses that differ from your last one. Compare flights and it suggests holding the cheapest option and re-checking the price in three hours. Review a long email thread and it offers to draft a reply that addresses the questions you missed. The AI starts working on the right thing without you needing to explain what the right thing is.

Each of these is small on its own. Together, they replace the dominant pattern of consumer AI — open a chat window, paste context, ask a question, copy the answer back — with something that feels like the AI is already standing next to you in the page.

For the average user, the experience is supposed to feel like the browser got smarter without you having to do anything different. The ambition is correct. The harder question is whether changes like these are finally enough to convert the silent majority of consumers who barely touch AI today. Even in 2025, the Reuters Institute’s Digital News Report found only about 7% of US adults use ChatGPT on a daily basis — meaning the vast majority of consumers still treat AI as something occasional, not as a regular part of how they get things done. Will removing the friction of opening a chat window finally tip the next wave of mainstream users?

Why Did Google Kill the Chromebook?

The Chromebook was a great device for the browser era. It treated the browser as the operating system because that is where the work was — in Google Docs, Gmail, web apps. The Chromebook was unbeatable at that one thing.

The AI era needs a different kind of device, not because the browser stopped being important (it is more important than ever) but because the way people use it is changing. The browser is no longer a passive window onto the web. It is an active workspace where the AI is meant to be one of the inputs alongside the keyboard and the mouse. A Chromebook was built around the assumption that you typed and clicked. The Google Book is built around the assumption that you shake, select, accept a suggestion, or ask.

Google is saying the next decade of computing is about turning the web browser into your workspace, with an AI agent jumping in as needed.

Others have different perspectives on what the next evolution of AI will look like. Apple is moving aggressively on on-device AI and spatial intelligence. Microsoft has Copilot embedded across Office, Edge, and Windows. Many other challengers believe that wearables will be the key to collecting the day-to-day context needed to make AI useful to everyone.

What Other AI Products Are Changing How We Work?

The Google Book is just one of many such bets.

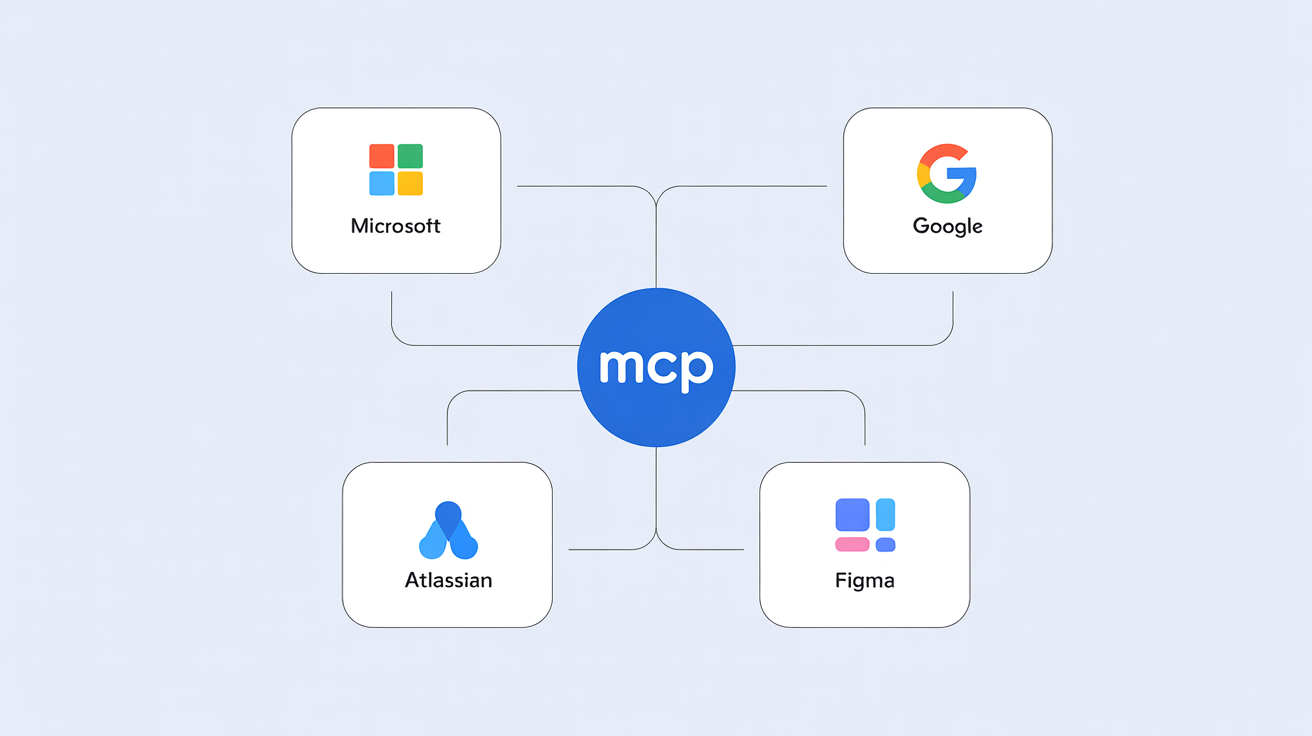

Atlassian’s Dia browser, built on the company’s 2025 acquisition of The Browser Company, is reorganizing what tabs, sessions, and context mean inside a browser. Dia is looking to re-invent browsing for knowledge workers by making your tasks and context easier to access.

Manus and Perplexity Computer are leaning into computer use: enabling LLMs to browse, click, and manage tasks on our behalf. The goal is to give LLMs tasks to do. The risk is that the capability is still quite new.

Outside the browser, a wave of physical AI products is trying to capture context from the real world rather than from the screen. Meta’s Ray-Ban glasses can see what you see and hear what you hear, surfacing AI without a tap. Plaud’s NotePin and Limitless AI’s Pendant capture every conversation around you and synthesize it into searchable notes. The most anticipated entry is still ahead: the device being co-designed by Sam Altman and Jony Ive after OpenAI’s 2025 acquisition of Ive’s hardware startup io, alongside Apple’s first generation of smart glasses expected to ship in 2026 or 2027. The bet is that the more an AI knows about your actual day, the more useful it can be in it.

The intent is clear but the path to success isn’t. The reality is that there simply aren’t signals yet about what it will take for consumers to overwhelmingly pick one device over another, as they did a generation ago when the iPhone was released.

What Does This Mean for How We Use ChatGPT and Claude?

For most knowledge workers, ChatGPT and Claude are already the default AI tools. But the expectation that all questions and work will happen in a chat interface probably has an expiry date, and it is coming soon.

The companies running ChatGPT and Claude understand this. Both are racing to build their own browser-embedded experiences, agent platforms, and computer-use capabilities. The destination chat experience is not going away, but it is no longer the only product. The next era will be about which AI makes our lives better, something that the frontier models have yet to solve.

Looking Back From 2030, What Will This Era Look Like?

By 2030, the way we use AI today will look the way dial-up internet looks now. The capability was already meaningful but the form and function were basic. We’ll recognize that, for the average consumer, using LLMs relied on a human copying and pasting to get anything meaningful done.

The defining inefficiency of this era was that we used a chat window as the universal input for every kind of task. Chat is the right tool for long, exploratory conversations.

The AI war of 2025 was about which model you talked to. By 2030, the question will be which AI gets to stand next to you in your work, and how naturally it does it.

Arpy Dragffy is an AI product strategist, CEO of ph1.ca, and host of the Product Impact Podcast. His work focuses on solving AI adoption barriers and creating KPIs for success.


