Playbook for Knowledge Workers to Survive the AI Jobpocalypse

What the job market actually looks like for knowledge workers, why your expertise is invaluable but your capabilities have been commoditized, and four operational paths through it.

- Stanford’s 2026 AI Index reports a 20% employment decline for software developers ages 22–25 since 2024, one in three employers expecting workforce reductions in the next year, and AI skill mentions in US job postings up 55% year over year.

- The layoffs are the visible part.

- The uncomfortable truth most senior knowledge workers are still adjusting to: the work you trained for over a decade (the deliverables that made you employable, the production capability that defined your seniority) has been commoditized in eighteen months.

- Heavy AI use degrades cognitive engagement.

- The current state of knowledge work substitution breaks into three bands.

The AI jobpocalypse is not a forecast. It is the operating environment.

Stanford’s 2026 AI Index reports employment for software developers ages 22–25 down 20% since 2024. Anthropic CEO Dario Amodei has warned that AI could eliminate half of all entry-level white-collar jobs within one to five years. Brian Armstrong’s May 2026 Coinbase memo supplied the layoff template that the next 100,000 reductions will follow, whether or not other CEOs read the original.

Most career advice in this market is one of two things: dismissive (build AI skills, become irreplaceable, ride the wave) or apocalyptic (the work is over). Neither is useful at the level a senior knowledge worker needs to operate.

This playbook is for senior knowledge workers — UX researchers, digital marketers, project managers, agency account managers, educators, designers, and the adjacent roles that share their structural position — who need to act on the reality of the next eighteen months. It does not assume the layoffs are reversible. It assumes you are positioning for what comes after.

The Job Market for Knowledge Workers Has Already Shifted

Stanford’s 2026 AI Index reports a 20% employment decline for software developers ages 22–25 since 2024, one in three employers expecting workforce reductions in the next year, and AI skill mentions in US job postings up 55% year over year. Pave’s Data Lab found that “the percentage of employees between the ages of 21 and 25 has been cut in half at large public technology companies over the last 2.5 years” — from 15.0% in February 2023 to 6.7% in August 2025. The 2026 update is expected to look worse once it publishes. The pipeline below mid-career is thinning faster than the layoff coverage suggests.
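The Pave figures can be read two ways: as a drop in percentage points or as a relative decline. A minimal sketch in Python separates the two, using only the two shares quoted above; the function and variable names are illustrative and not drawn from Pave's report.

```python
def relative_decline(before: float, after: float) -> float:
    """Return the decline from `before` to `after` as a fraction of `before`."""
    return (before - after) / before


# Share of employees aged 21 to 25 at large public tech companies (percent),
# as quoted from Pave's Data Lab in the paragraph above.
share_feb_2023 = 15.0
share_aug_2025 = 6.7

point_drop = share_feb_2023 - share_aug_2025            # percentage points
rel = relative_decline(share_feb_2023, share_aug_2025)  # fraction of the starting share

print(f"Drop in percentage points: {point_drop:.1f}")
print(f"Relative decline: {rel:.1%}")
```

The drop is 8.3 percentage points, or about 55% of the February 2023 share, which is consistent with the “cut in half” description.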

What the Executives Are Saying

The executive statements are unambiguous. Dario Amodei told Axios in May 2025 that AI could eliminate half of all entry-level white-collar jobs within one to five years. Brian Armstrong’s May 2026 Coinbase memo described the company as “rebuilding Coinbase as an intelligence, with humans around the edge aligning it” while cutting 14% of staff. Tobi Lutke’s April 2025 Shopify memo made AI fluency a hiring and promotion requirement and instructed managers to prove a task cannot be done by AI before requesting additional headcount. Marc Benioff told Bloomberg he was not hiring engineers because Agentforce was delivering 30% productivity gains. These are not forecasts — the executives are operationalizing displacement.

McKinsey’s State of Organizations 2026 reports 32% of organizations expect AI to reduce total workforce by 3% or more in the next year, up from 25% the year before. Brynjolfsson, Chandar, and Chen’s 2025 “Canaries in the Coal Mine” from the Stanford Digital Economy Lab identified the key pattern: employment effects front-run the capability advances. Jobs restructure before the automation arrives.

Anthropic’s March 2026 Economic Index reports that roughly 49% of US occupations have had at least a quarter of their tasks performed with Claude, and AI is spreading into the judgment layer, not concentrating at the execution base.

LinkedIn’s January 2026 Labor Market Report shows a 70% year-over-year increase in US roles requiring AI literacy, with hiring concentrated at AI-native companies, professional services firms, and organizations actively building AI products.

Job security for knowledge workers in 2026 is no longer an outcome of role longevity or seniority. It is an outcome of how well your specific capability profile maps to the layer of work organizations now want humans doing.

The Org Charts Are Being Redesigned, and Job Descriptions With Them

The layoffs are the visible part. The redesign of the org chart is the underlying mechanism, and it is what most career advice fails to account for.

I wrote in “Every CEO Will Post a Layoff Notice Like This. Here Is Why” that Armstrong’s memo will be the template for the next 100,000 tech layoffs because it operationalizes an ideology about organizational redesign that has been forming for three years. The three structural principles:

A hard cap of five management layers. Middle-org overhead is now observable and quantifiable in ways that were previously only arguable.

No pure management roles. Judge-only managers — people who supervise but do not produce — are now observable as pure cost.

AI-native pods. Teams concentrated around people who can manage agent fleets, including one-person teams. Jensen Huang’s projection of 100 agents per human employee is the design ceiling.

Job descriptions are being rewritten to fit this model. The senior generalist who supervised three reports is being rewritten as the senior individual contributor with two AI agents. The middle manager whose job was coordination is being rewritten away entirely. The execution-heavy specialist is being rewritten as the AI-assisted version at lower headcount.

The academic literature tracks the same direction. Chalmers et al. (2026) in Journal of Management Studies argue AI’s organizational impact runs through redesigned coordination structures: the people who survive reorganizations are not the ones whose tasks survived, but the ones who became part of the redesigned coordination layer. Stelmaszak et al. (2026) frame the shift as “human-algorithm relations” becoming the basic unit of organizing.

The strategic implication is that change is inevitable across every private-sector business, though each industry will move at its own pace. Just as a look back over the last ten years shows how much roles have changed, many current roles will no longer exist in a few years, and the pace of change will be quicker this time.

Your Expertise Is Invaluable. Your Capabilities Have Been Commoditized.

The uncomfortable truth most senior knowledge workers are still adjusting to: the work you trained for over a decade — the deliverables that made you employable, the production capability that defined your seniority — has been commoditized in eighteen months.

For research and analysis: NotebookLM ingests 50 sources and produces clean synthesis. Claude with extended thinking produces interview analyses indistinguishable from a mid-level researcher’s first draft. Perplexity Pro and ChatGPT Deep Research execute the literature reviews that took analysts a week.

For coding and engineering: Cursor and Claude Code produce production-grade software with senior-IC fluency. Devin from Cognition delivers autonomous engineering on bounded tasks. Replit Agent, v0, and Lovable build full applications from natural-language briefs. GitHub Copilot is no longer the frontier; it is the floor.

For content and design: ChatGPT, Claude, and Gemini produce campaign copy and brand voice extensions. Midjourney, Sora, and Runway handle the visual and video work that demanded production teams. Figma’s AI features generate first-draft interfaces. Canva’s Magic Studio scales brand-consistent content production for any team.

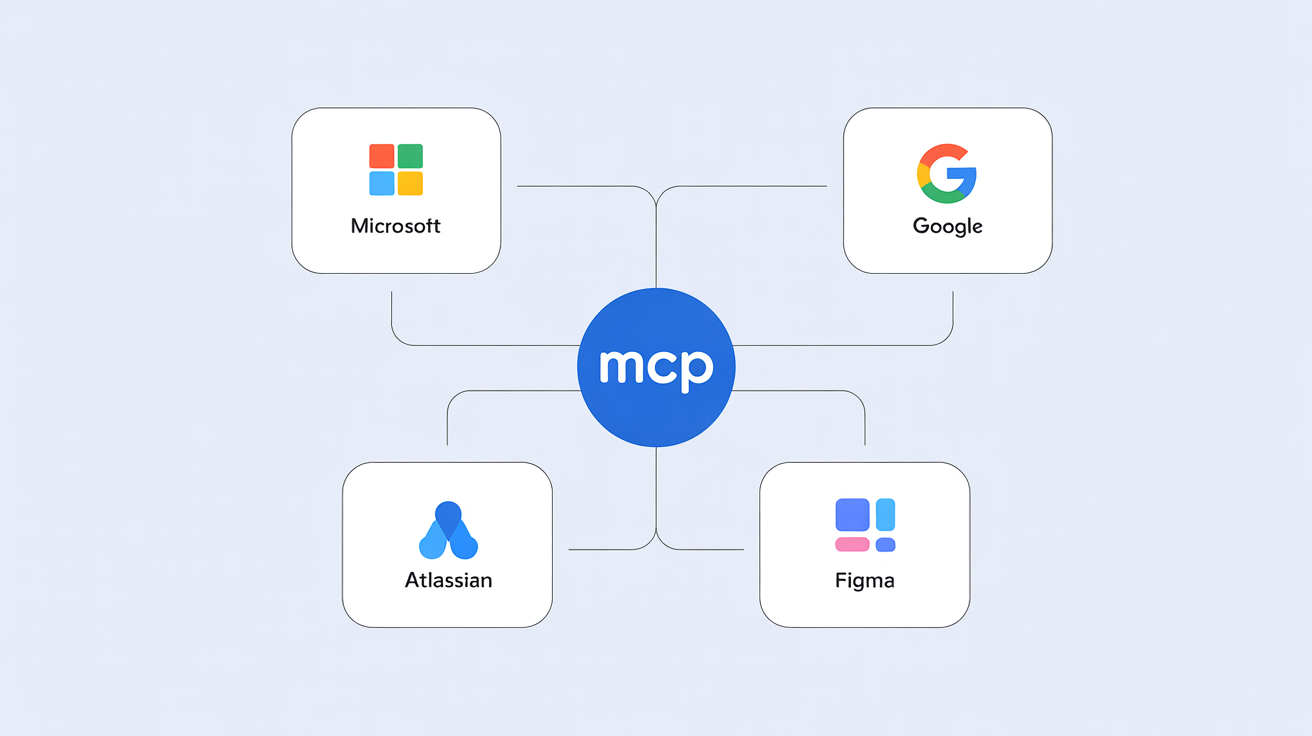

For agents and operations: ChatGPT Operator and Claude with Computer Use execute on websites. Salesforce Agentforce and Atlassian Rovo handle enterprise workflows. Manus and OpenAI’s general-purpose agents take multi-step instructions and execute end-to-end. Harvey handles legal research. Lattice and several HR platforms are deploying digital workers as employees with performance reviews.

These are production tools organizations are buying, deploying, and using to replace deliverables your job description listed last year.

The improvement curve is the harder problem. Ethan Mollick wrote in June 2023 — and formalized as Rule 4 in Co-Intelligence — that “today’s AI is the worst AI you will ever use.” METR’s March 2025 paper found the time horizon for tasks AI can complete with 50% reliability has been doubling approximately every seven months since 2019. Stanford’s 2026 AI Index reports single-year performance gains of 18.8 points on MMMU and 67.3 points on SWE-bench.
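To make the compounding concrete, here is a minimal sketch of the arithmetic. It assumes the seven-month doubling reported by METR simply continues at a constant rate, which is an extrapolation for illustration and not something the paper guarantees. The function name `horizon_multiplier` is hypothetical.

```python
def horizon_multiplier(months_elapsed: float, doubling_months: float = 7.0) -> float:
    """Factor by which the task time horizon grows after `months_elapsed`,
    assuming a constant doubling period (an assumption, not a forecast)."""
    return 2 ** (months_elapsed / doubling_months)


# Print the growth factor for a few planning windows.
for months in (7, 12, 18, 36):
    print(f"{months:>2} months: x{horizon_multiplier(months):.1f}")
```

Under that assumption, an eighteen-month window corresponds to a task horizon roughly six times longer than today's.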

Your expertise — the judgment built across years of work — is not what got commoditized. The deliverables you produced as evidence of that expertise did. The two are not the same, and most of the panic in the senior knowledge worker community comes from confusing them.

AI Makes Us Dumber. It Also Makes Us Faster. Your Executives Have Picked.

Heavy AI use degrades cognitive engagement. Kosmyna et al. (2025) at MIT Media Lab measured EEG signals across 54 participants and found LLM users had the weakest neural connectivity; 83% could not quote from essays they had just written. The authors describe the effect as cognitive debt — unprocessed information accumulated when integration is offloaded to the model.

Lee, Sarkar et al. (2025) at Microsoft Research and CMU surveyed 319 knowledge workers and found higher GenAI confidence was associated with less critical thinking. GenAI shifts critical thinking from information gathering to verification, integration, and stewardship of AI output — and most workers are not yet trained for that layer.

What workers are not processing, they are not retaining. The long compounding of expertise — the slowly internalized pattern recognition that defines senior judgment — does not happen when the model produced the output. 404 Media’s August 2025 report “Software Developers Say AI Is Rotting Their Brains” captured what is showing up across Reddit, Hacker News, and developer forums: “more and more people are becoming disillusioned with the promise of code generated by large language models … developers who use AI at work report that they feel like they are de-skilling themselves and losing their ability to do their jobs as well as they used to.”

The countervailing evidence is just as real for the workers it applies to. Dell’Acqua, Mollick et al.’s Jagged Frontier study (2025) found consultants inside AI’s capability zone completed 12.2% more tasks 25.1% faster at higher quality. Microsoft’s 2026 Work Trend Index puts 80% of “Frontier professionals” producing work they could not have produced a year earlier. Anthropic’s Economic Index and Atlassian’s State of Teams research report the same split: a minority extracts dramatic gains, most extract modest gains, a meaningful subset perform worse with AI than without.

The split is not about effort. The Jagged Frontier finding holds inside the model’s capability zone, and the workers who land inside it consistently are the ones who can think abstractly and systematically — work most knowledge workers were never explicitly trained for. That re-wiring of how to think does not come naturally to most people.

The executive calculation runs on top of that uncertainty. Investors and customers have set unrealistic expectations for AI productivity, and CEOs are under quarterly pressure to demonstrate AI maturity relative to peers. The operating decision is not whether AI delivers consistent gains — the evidence is genuinely unclear — but whether the organization is becoming visibly more AI-mature than its competitors. That pressure forces decisions that bring the pain sooner, in the expectation the gains come sooner too.

The risk inside that bet: a meaningful portion of the workforce cannot become AI-native, not for lack of effort, but because effectively working with AI — and especially with autonomous agents — requires a re-wiring of how to think that is genuinely uncomfortable for most people. The system pressure does not account for that.

The Skills Matrix: What AI Has Commoditized, and What It Hasn’t

The current state of knowledge work substitution breaks into three bands.

What AI can do very well:

- First-draft production from structured inputs
- Templated deliverables and routine reporting
- Synthesis from clean data
- Code generation for well-specified requirements
- Meeting transcription and summarization
- Campaign copy and ad variants
- Slide deck production

What AI can do adequately:

- Designing a marketing asset, website, or report cover
- Searching for data and code across several tables and databases
- Multi-modal data synthesis from messy real-world inputs
- Audience segmentation modeling
- Resource allocation planning
- Brand-consistent content production at scale
- Drafting status updates that reflect cross-team dependencies

What AI struggles with:

- Designing original and powerful creative
- Assessing which result is authoritative when several exist
- Evaluating the intent behind decisions
- Brand judgment grounded in institutional memory
- Reading a market moment across cycles
- Stakeholder navigation when two senior sponsors want contradictory outcomes
- Ethical and values-based decisions that require visible human accountability

Every six months, items shift up a band. To stay current, check Cubit by Maiden Labs for capability tracking, Anthropic’s Economic Index for what tasks the market has actually shifted onto AI, and the Stanford AI Index for the broader landscape. As I wrote in “Enterprise Context Is the AI Moat Nobody Built,” the durable boundary is the judgment that requires institutional context, continuity, and accountability, all of which the model structurally lacks.

Find Your Competitive Advantage: Think of Yourself as a Product

The senior knowledge workers who are pricing well in this market — whether inside organizations or as independents — have stopped thinking of themselves as a role. They have started thinking of themselves as a product.

The product framework has four stages, and they apply directly to a career.

Find your exceptional capabilities. Not the ones in your job description — the ones where colleagues route the hard questions to you, where the outcome is attributable to your judgment rather than to following a process. What do you do that stakeholders repeatedly say they cannot get elsewhere?

Amplify them. Tacit capability gets cut in reorgs because it is invisible to decision-makers. The research design that caught a two-year-old assumption is career infrastructure — but only if it is attributable to you in a form another person could point to. Amplification runs through documented outcomes, repeated demonstration in high-stakes contexts, and explicit positioning in the narrative about what your function does.

Augment with technology. The objective is leverage on your exceptional capability, not the addition of AI to your existing process. A research lead who can supervise three concurrent studies because AI is processing the transcripts is more valuable than the same lead doing one manually. A marketing director who can ship across eight channels because AI is producing the variants under their brief is more valuable than the same director shipping across two. The augmentation is the multiplier, not a substitute.

Find the ideal audience. Markets pay differently for the same capability. Which buyers value this capability most highly, in what context, at what stage, with what willingness to pay? The audience that values brand judgment is not the audience that values cost optimization. The audience that values disconfirmation discipline in research is the one making high-stakes product bets, not the one running incremental optimization. Finding the audience is not separate from defining the product.

What This Looks Like for UX Researchers, Digital Marketers, and Project Managers

UX researchers. Research has been marginalized in many organizations because the function drifted toward managing tools rather than owning product outcomes. That is the drift to reverse. Your core skill — converting customer context into decisions that reduce organizational uncertainty — travels far beyond the traditional research function. AI can execute the study but cannot determine whether it was the right study to run. It codes the transcript; it cannot understand why a participant behaved the way they did.

The move is from the assembly line of deliverables to the boardroom of bets. Organizations making high-stakes product decisions are betting on user behavior. The researcher who frames the right question and designs the study that could disprove the hypothesis is doing something the model cannot replicate.

Contrarian take: Evals are the next evolution of the usability test. As AI systems proliferate inside organizations, someone has to design and run the tests that determine whether AI behavior matches intended outcomes. That someone should be the person who has spent years testing human behavior against stated intent. The researchers who develop evaluation design as a core skill now are positioning for a function that does not yet fully exist but will be mandatory within two to three years.

Digital marketers. Marketing was the most data-concentrated knowledge work function before the AI wave, which makes it simultaneously the most automated and most competitive field to navigate. The senior practitioner who knows which tools compound versus which produce vanity metrics has structural advantage over whoever is running the previous cycle’s stack.

Your primary weapon is proving what works faster than your competitors. That requires the clearest model of attribution, the highest confidence in the metrics that drive revenue, and the ability to read customer intent in data rather than at surface level. The scale tools are commodities. The interpretation layer is not.

Contrarian take: Go-to-market is the hardest skill in a market where building is essentially free. If you can take something to market and make it win, that capability is more scarce than any technical execution skill. The strongest move for experienced digital marketers is to apply GTM expertise directly — to their own projects or as independents — building distribution, audience, and proven market intuition that no employer can replicate through internal optimization.

Project managers. This is the role most directly in the path of AI adoption in 2026 and 2027, and it requires the most honest assessment. Context graphs and AI-native workflow tools will absorb the majority of traditional PM work — status tracking, dependency mapping, documentation overhead, meeting coordination. The organizations that navigate 2027 coherently will be those that moved internal context out of scattered Confluence pages and Slack threads into structured, queryable systems. That work is not owned by anyone yet.

The PMs who reposition as owners of context and organizational knowledge — auditing and governing the institutional memory that AI systems need — will have leverage over the entire operational output of their organizations. The capability transfer is direct: you already know how processes connect and where decisions go undocumented.

Contrarian take: Project management is among the hardest-hit roles in the AI restructuring. For those who do not want to make the context management pivot, move before the restructuring arrives rather than after. PM relationships, process expertise, and operational pattern recognition transfer well into operations, product operations, and chief of staff roles — anywhere coordination complexity is still human-intensive.

The senior knowledge workers who survive this market do not have generic versions of their function. They have a specific product, amplified through visibility, augmented through technology, positioned for an audience that values it.

Four Paths to Surviving a Tough Time for Knowledge Workers

Once the competitive advantage is identified and the audience defined, the operational question is which path you execute. The four paths below are the realistic options for senior knowledge workers in 2026. None is universally right. Each is matched to a different combination of organizational reality, market position, and personal infrastructure.

Path 1: Navigate the reorg from inside. Viable when your organization is genuinely redesigning rather than reducing, and when you can position yourself in the judgment layer before the restructuring assigns you elsewhere. Prerequisites: your direct manager has standing inside the redesign process, and your function’s durable core is valued as human in the organization’s new logic. Make the interface role explicit before someone else defines it. Reorgs assign new roles to whoever showed up with a plan.

Path 2: Move to a better-positioned organization. Viable when your specialty is healthy in the broader market but your current org is restructuring in ways that do not need your function’s durable core. The roles worth targeting are those where the job description shows the function has been redesigned — not just labeled “AI-enabled” over an unchanged set of responsibilities. Senior practitioners with current AI fluency and domain depth are scarce and commanding pricing power. That window closes as the next cohort develops AI fluency natively.

Path 3: Pivot to an adjacent domain. Viable when your function is contracting market-wide. The sectors actively absorbing displaced knowledge workers: healthcare (the fastest-growing major sector per BLS projections through 2034), government and civic technology, and professional services firms needing practitioners accountable for AI-supervised work. Brookings found approximately 70% of highly AI-exposed workers have sufficient transferable skills for a role shift — but the pivot must be scoped to durable capabilities, not job titles.

Path 4: Go independent. Viable when you have a real track record and network — specific outcomes attributable to you, and decision-makers who would pay for project work today. MBO Partners 2025 reports 5.6 million US independent professionals earning over $100K, up 19% year over year. Upwork’s 2026 In-Demand Skills found AI-enabled freelancers earn approximately 40% more per hour than peers using traditional methods. The prerequisite is binary: specific decision-makers would refer paying work today, or this path requires building toward that first.

Most senior practitioners find more than one path viable, or find a primary path needs a secondary path built in parallel. Use whatever runway remains in the current role to build the prerequisites for the next one. Time inside a deteriorating organization is not wasted if it is converting tacit capability into documented track record.

The AI jobpocalypse is not a one-time event you survive. It is a permanent restructuring of how knowledge work is organized, valued, and paid for. The senior knowledge workers who are doing well in 2026 are not the ones who avoided AI or trusted it fully. They are the ones who understood their specific competitive advantage, made it visible, augmented it appropriately, and positioned for the audience that values it most. That work does not finish. It becomes the operating discipline of the rest of your career.

Arpy Dragffy is an AI product strategist, CEO of ph1.ca, and host of the Product Impact Podcast. His work focuses on solving AI adoption barriers and creating KPIs for success.


Hosted by Arpy Dragffy and Brittany Hobbs. Arpy runs PH1 Research, a product adoption research firm, and leads AI Value Acceleration, enterprise AI consulting.
