What UX Research Looks Like When Context Becomes the Engine

The role is moving from a step that produces decks to infrastructure that powers everyone else's AI. Both halves of that sentence are going to be hard.


Part Three of the UX Researcher’s AI Toolkit.

In the first five months of 2026, the substrate UX research depends on changed shape fast.

Anthropic, Google, OpenAI, and Meta all shipped models that hold a year of company documents in working context. Atlassian shipped its Teamwork Graph at Team ’26: 150 billion connections, with agents grounded in it producing 44% more accurate answers at substantially lower cost. Lovable shipped its MCP server. Microsoft’s 2025 Future of Work report shows 35.9% of US workers now using generative AI.

While many people working in UX feel threatened by layoffs, the foundations beneath their feet are shifting faster than most of us realise. We used to worry about delivering decks that get lost in a drive somewhere; now we might quite literally be among the most important contributors to making AI systems valuable.

Part One covered tooling. Part Two covered cognitive shifts. This piece is structural.

The speed of change since ChatGPT

Here is roughly how my own working setup has shifted in three years:

- 2023: Explored how useful, and how generally terrible, ChatGPT was at summarising content and answering complex research questions. Treated it as scaffolding to verify, not analysis to ship.

- 2024: Started using Claude Projects and Gemini Gems to make responses more consistent through curated briefs, examples, and a coding taxonomy.

- 2025: Started learning Claude Cowork and Claude Code to augment my capacity through reusable templates and cross-project context.

- 2026: Unlocked compounding returns by orchestrating it all together: a GitHub repository of prior work, agents for recruitment, interview guides, analysis, synthesis, and report writing, and skills that hold my voice and each client’s brand.

A study that took three weeks in 2023 now takes two days at better quality, and I’m charging for insight rather than hours.

What knowledge graphs and MCP actually mean

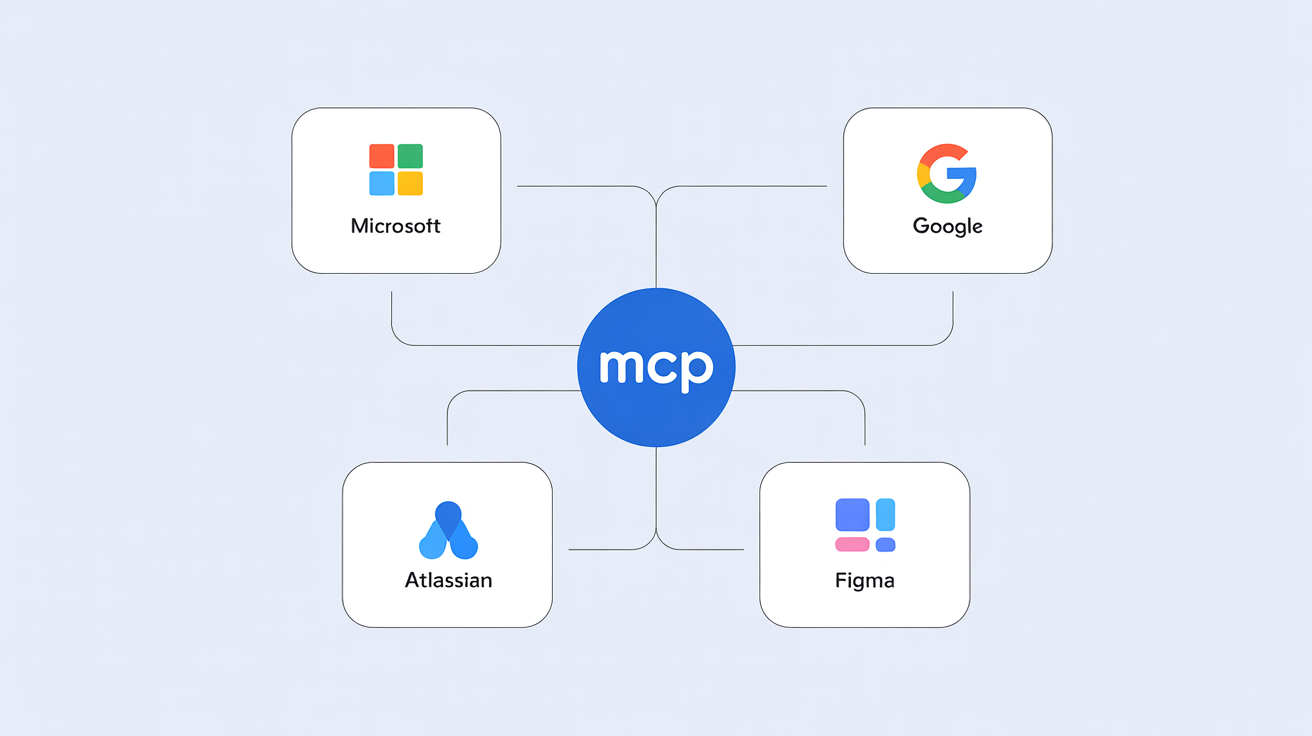

Picture your company’s collective knowledge as a dot-connecting diagram. Each dot is a person, project, customer, finding, or decision; each line is the relationship between them. That diagram is a knowledge graph, and the company that builds it well becomes the company whose AI agents hallucinate far less. Atlassian shipped one in May 2026 with 150 billion connections inside it (covered in the companion piece on graphs), with agents grounded in it producing 44% more accurate answers at substantially lower cost. MCP (the Model Context Protocol), the open standard introduced by Anthropic, is the second piece: the protocol that lets any tool plug into any AI agent the way any USB device plugs into any computer.

What this means for research, in practical terms: your survey results, your usability findings, your competitive insights, your verbatim from the interview where the participant changed their mind, all become directly readable by every other team’s AI agent. Your work gets used by everyone, not just the PM who commissioned it.
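To make the dots-and-lines picture concrete, here is a minimal sketch of a knowledge graph in Python. It is a toy in-memory structure, not how Atlassian or any vendor implements theirs; the class names, relation labels, and example findings are all invented for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Node:
    """One dot in the diagram: a person, project, customer, finding, or decision."""
    id: str
    kind: str
    label: str


class KnowledgeGraph:
    """A toy in-memory graph; names and structure are illustrative assumptions."""

    def __init__(self):
        self.nodes = {}
        self.edges = defaultdict(list)  # node id -> [(relation, other node id)]

    def add_node(self, node):
        self.nodes[node.id] = node

    def connect(self, source_id, relation, target_id):
        # Store both directions so a lookup can start from either dot.
        self.edges[source_id].append((relation, target_id))
        self.edges[target_id].append((f"inverse of {relation}", source_id))

    def context_for(self, node_id):
        """Return plain-text facts about a node's direct relationships."""
        start = self.nodes[node_id]
        return [
            f"{start.label} --{relation}--> {self.nodes[other].label}"
            for relation, other in self.edges[node_id]
        ]


# Hypothetical example data: one finding linked to a project and a decision.
graph = KnowledgeGraph()
graph.add_node(Node("f1", "finding", "Users abandon onboarding at step 3"))
graph.add_node(Node("p1", "project", "Onboarding redesign"))
graph.add_node(Node("d1", "decision", "Cut step 3 from the signup flow"))
graph.connect("f1", "came from", "p1")
graph.connect("f1", "informed", "d1")

for fact in graph.context_for("f1"):
    print(fact)
```

The point of the sketch is the last loop: an agent asking about the finding gets back the project it came from and the decision it informed, which is the kind of grounding a flat folder of decks cannot provide.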

A turning point for UXR

UX research is at a turning point that looks different to a research leader than to a practitioner, and most of the public conversation collapses them into one argument that does not serve either audience. For leaders, the question is whether the function survives the next budget cycle by becoming the trusted insight infrastructure for the rest of the AI stack, or gets quietly dispersed because the stack found a way to run without it. For practitioners, the question is whether the role you have today exists in two years, in what shape, and at what compensation.

Why this is research’s strongest structural position ever

Research outputs are some of the highest-quality context any company has. The other sources of customer truth — support tickets, sales calls, product analytics — are messy, repetitive, or full of noise by comparison. Research has been collected with a methodology, organised, and validated.

When agents ground answers in that material, quality goes up sharply. The LangChain State of AI Agents 2026 reports that every 1% increase in input context produces a 0.38% increase in output quality, and that agents without good context drop from 60% accuracy on a single run to 25% across eight consecutive runs. The 32% of respondents naming quality as the top barrier to production AI are mostly describing a context problem dressed as a model problem. Research becomes the leading indicator for every other function’s AI quality.

Why the role itself keeps getting discredited

The role data was already moving in the wrong direction before this shift, and the shift accelerates the pattern.

Indeed reports that UX research postings dropped 73% from 2022 to 2023. User Interviews’ 2025 State of User Research found that 49% of researchers feel negative about the future and that 21% of organisations laid off researchers in 2025. MeasuringU puts UX staff reductions at 35% of organisations. The State of AI Agents 2026 reports that 88% of enterprise agent pilots fail to graduate to production, with evaluation gaps and context fragmentation as the top blockers.

In 2025 you run a study and argue with the PM about what it means. In 2027 the PM works through their own AI agent and acts on the answer without you in the conversation. PMs benefit most directly from AI because pulling context together is exactly what AI amplifies, so your closest collaborators get the biggest boost from the same shift that pushes you further from their work.

Two UXR platforms and where their roadmaps are heading

MCP changes what a research platform is. Once a tool speaks MCP, both exposing its own endpoint and connecting to others, it can pull from Figma files, Confluence and Google Docs, support tickets, surveys, brand guidelines, and product analytics within a single agent workflow. Platforms that use that composability expand. Platforms that don’t get reduced to one MCP endpoint inside someone else’s workflow.
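For readers who have not looked under the hood, MCP messages are JSON-RPC 2.0, and an agent invokes a tool with a `tools/call` request. The sketch below shows only that message shape, using the standard library. The `search_findings` tool, the `FINDINGS` data, and the `handle_request` function are hypothetical stand-ins; a real server would use an MCP SDK and also handle the transport, the initialisation handshake, and tool listing.

```python
import json

# Hypothetical in-memory repository standing in for a real research platform.
FINDINGS = [
    {"study": "Onboarding interviews", "text": "Participants skip the tutorial."},
    {"study": "Pricing survey", "text": "Annual billing confuses small teams."},
]


def search_findings(query):
    """Hypothetical tool: case-insensitive substring match over findings."""
    return [f for f in FINDINGS if query.lower() in f["text"].lower()]


TOOLS = {"search_findings": search_findings}


def handle_request(raw):
    """Answer a JSON-RPC 2.0 `tools/call` request with an MCP-style result."""
    request = json.loads(raw)
    if request.get("method") != "tools/call":
        # -32601 is the standard JSON-RPC "method not found" error code.
        return json.dumps({
            "jsonrpc": "2.0",
            "id": request.get("id"),
            "error": {"code": -32601, "message": "Method not found"},
        })
    params = request["params"]
    matches = TOOLS[params["name"]](**params["arguments"])
    text = "\n".join(f'{m["study"]}: {m["text"]}' for m in matches)
    return json.dumps({
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {"content": [{"type": "text", "text": text}], "isError": False},
    })


# What another team's agent would send to ask the research tool a question.
request = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "search_findings", "arguments": {"query": "tutorial"}},
})
response = json.loads(handle_request(request))
print(response["result"]["content"][0]["text"])
```

Because every tool answers in the same envelope, the calling agent does not need to know whether the text came from a research repository, a design file, or an analytics platform, which is what makes the composability possible.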

Maze

- 2024: Unmoderated prototype testing with AI synthesis features starting to land.

- Today: AI-native testing and continuous research, with a 2025 trends report anchoring the category narrative.

- 2027 with MCP: Expands into the agentic continuous-research command centre (pulling Figma design context and product analytics on top of its testing engine) or shrinks to a thin testing API called by other teams’ AI stacks.

UserTesting

- 2024: Enterprise testing platform with the largest verified panel and AI insights features starting to land.

- Today: AI-native enterprise research, UserZoom acquisition consolidating the panel layer.

- 2027 with MCP: Expands into the human-in-the-loop service for any AI workflow in the company (Figma plugins, content tools, marketing platforms) where verifiably real human signal is the moat, as Alexis Ohanian has argued, or shrinks to a panel-access API the rest of the stack calls when it needs a real human.

The pattern: MCP either lets a platform expand the surface area it owns or reduces it to one piece of someone else’s workflow. The roadmap decision is being made this year.

What a discovery study looks like in 2025 vs 2027

2025 today. A discovery study takes four to six weeks. Panel-partner recruitment runs one to two weeks, sessions are scheduled one at a time, the researcher codes in Dovetail or a spreadsheet with AI on first pass, and synthesis takes another week or two. The deliverable is a forty-slide deck presented live, and the conversation in the room is where most of the learning happens.

2027, scenario A: research positioned as infrastructure. The same study runs in a week. An MCP-connected panel agent returns verified participants in hours, sessions run in parallel with AI moderation for structural questions and the researcher in the room for strategic moments, and synthesis flows through an agent grounded in prior research and current product context. Output lands directly inside the PM’s, CMO’s, and CS leader’s agents, and the researcher’s role compresses from execution to orchestration.

2027, scenario B: research sidelined. The PM’s AI agent skips research entirely, pulls patterns from support tickets and analytics, and ships a recommendation the team treats as good enough. Studies still happen, but they run as fast operational checks against AI-generated hypotheses rather than the strategic input that shapes what gets built. The researcher’s role narrows to running studies AI cannot automate, on a velocity treadmill set by whoever has the AI budget.

Which scenario lands is decided this year, by whether research leaders make the case for the function as trusted insight infrastructure or let the AI stack run without them.

How to position for the new role this year

UX research will be structurally more important than ever, because every function’s AI depends on the context grounding it. At the same time, the role itself will keep being discredited, because much of what researchers historically did is exactly what AI does well.

Write your methodology down as small skills — recruiting, interview guide, analysis, synthesis, report writing. Nikki Anderson’s framework on Dscout is the most detailed public model, and the act of writing it is a methodology audit most senior researchers have never had a reason to do.

Invest in what AI cannot replicate: the instinct that an interview is going off-script, the recognition that a participant is performing rather than reporting. Steve Portigal’s Interviewing Users and Indi Young’s mental-models work are the canonical references.

The researcher mindset — connecting what people need with what an organisation builds for them — is going to be one of the most valuable mindsets in the company by 2027. Encode it somewhere a system can use it before that conversation happens above your head.

Context management for UX Researchers

If I had to pick the single skill that will matter most for UX research teams in 2026 and 2027, it would be context management. The shift the rest of this article describes — knowledge graphs, MCP-connected tools, agents grounded in organisational context — puts the work of choosing what context an AI sees, how it is structured, and how to keep it useful at the centre of what every researcher does. Employers are about to start asking teams to do this well, and most teams have not been trained for it.

The skill has two halves: understanding what context is actually available inside your organisation (research repositories, product analytics, support tickets, design files, prior decision logs), and interpreting which pieces to assemble for which agent, in which workflow, to amplify output quality and reduce token usage so the cost of running an agent stays in proportion to the value it produces.
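As a rough illustration of the second half, the sketch below picks which context to hand an agent under a token budget. It is one simple approach (greedy selection by relevance), not a recommended production method. The `Snippet` fields, the relevance scores, and the four-characters-per-token estimate are assumptions made for the example; a real setup would score relevance upstream and count tokens with the model's own tokeniser.

```python
from dataclasses import dataclass


@dataclass
class Snippet:
    source: str       # e.g. "research repository", "support tickets"
    text: str
    relevance: float  # 0..1, assumed to be scored upstream


def estimate_tokens(text):
    # Crude assumption: roughly four characters per token.
    return max(1, len(text) // 4)


def assemble_context(snippets, token_budget):
    """Greedy selection: take the most relevant snippets that still fit the budget."""
    chosen, used = [], 0
    for snippet in sorted(snippets, key=lambda s: s.relevance, reverse=True):
        cost = estimate_tokens(snippet.text)
        if used + cost <= token_budget:
            chosen.append(snippet)
            used += cost
    return chosen, used


# Hypothetical candidates for a PM agent asking about onboarding drop-off.
candidates = [
    Snippet("research repository",
            "Eight of twelve participants abandoned onboarding at the permissions step.",
            0.9),
    Snippet("support tickets",
            "Repeated tickets mention confusion about why calendar access is required.",
            0.7),
    Snippet("brand guidelines",
            "Use sentence case for all button labels and avoid exclamation marks.",
            0.1),
]

selected, tokens_used = assemble_context(candidates, token_budget=40)
print([s.source for s in selected], tokens_used)
```

The design choice worth noticing is that low-relevance material is left out even when it would fit in a larger budget only by accident of ordering: the budget forces an explicit decision about what each agent needs to see, which is the judgement call the skill is about.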

I’m planning to launch an online course about context management for UX researchers later this year, designed for senior practitioners and research leaders. If you would be interested in testing the curriculum, I would love your input. A short signal of interest is enough.

Brittany Hobbs is COO and VP Research at PH1, CEO of AI Value Acceleration, and co-host of the Product Impact podcast. She has led research at Mozilla, Spotify, Google, BBVA, TELUS Health, and Schneider Electric across more than 300 engagements.
