WTF is an AI-native org anyways? Let's compare Airbnb & Meta's opposing plans.

Two companies. Both convinced the future depends on AI. Wildly different strategies. What Airbnb and Meta are doing — and where they actually converge — tells you more about AI adoption than anything you'll read on LinkedIn.

- Meta's Q1 2026 profits hit a record $26.8 billion. Eight thousand layoffs are planned for May 20. Employees describe the mood as 'horrifically, historically low.' The combination isn't a contradiction; it's what becoming AI-native looks like from the inside.

- Airbnb's CHRO Iain Roberts laid out five specific moves at Stanford: markdown instead of PDFs, reusable workflow 'skills,' mining meeting recordings, leaders who personally build with AI, and dedicated organizational architects.

- Only 19% of AI users are in the zone where the technology is actually compounding their work (Microsoft 2026 Work Trend Index). The gap isn't aptitude; it's the organization.

- Both Airbnb and Meta are betting on the same future: an operating model where AI generates the bulk of the work and humans direct, judge, and decide. The split is about how to take their people there.

Three stories converged this week, and the through-line is bigger than any of them on its own.

WIRED reported that even as Meta posted its best quarter in history — $26.8 billion in Q1 2026 profit — employees describe the internal mood as "horrifically, historically low." Yahoo Finance and AOL confirmed roughly 8,000 people are set to lose their jobs on May 20. The New York Times called it directly: "Meta's Embrace of A.I. Is Making Its Employees Miserable."

Meta's stock has climbed steadily through 2026 on the strength of record ad revenue and investor confidence in the AI spending story. The financial case is being rewarded while the human cost shows up on every internal channel.

The same week, Airbnb's CHRO Iain Roberts presented at Stanford HAI on a completely different approach to the same problem. Mary Kate Stimmler's LinkedIn summary captured Roberts walking through five specific moves Airbnb is making to become AI-native — none of which involve forced metrics, layoffs, or pressure.

The contrast is the most useful thing I've come across this week on what AI adoption actually requires — two companies looking at the same future with wildly different bets on how to take their people there.

Regardless of what you read on LinkedIn, AI adoption is still a problem

The narrative around AI in 2026 is dominated by leaders who say they've figured it out. The data says something different.

Microsoft's 2026 Work Trend Index surveyed 20,000 knowledge workers across 10 markets and found that only 19% of AI users are in the "Frontier" zone where the technology is actually compounding their work. Half are stuck in the middle — using AI, but not seeing real gains. The Stanford 2026 AI Index is blunter: 88% of organizations are using AI in some form, but fewer than 10% have it working at real scale in any single function.

Where the gains do exist, they're huge. Anthropic's Economic Index from March 2026 found experienced AI users dramatically outperform newcomers, and the gap isn't about being smarter. It's about working inside a system that's been deliberately built to make AI useful. Anthropic CEO Dario Amodei has put it more starkly, warning that AI could eliminate half of entry-level white-collar jobs within one to five years. If that happens, the gains will be concentrated among people and organizations that have already done the structural work.

The takeaway: AI adoption is an organizational design problem, not a tool problem. The people winning are inside companies that built the right conditions for AI to compound.

What is an AI-native org and why is every leader obsessed with it?

"AI-native" gets thrown around constantly, so let me make it plain. An AI-native organization has rebuilt how it works around the assumption that AI will do significant portions of the work. Not bolted on. Not as a productivity layer. Structurally — in how teams are organized, how information flows, and how decisions get made.

Leaders are obsessed with this because the ROI math hasn't worked the way they were promised. Between 2023 and 2025, most enterprises spent heavily on AI tools. What they got was narrow productivity gains in some pockets and inertia almost everywhere else. The returns are real, but they're inconsistent. And the inconsistency comes from the organization, not the model.

Companies are built on siloed information sitting in different systems, multiple internal cultures pulling in different directions, and definitions of the same thing — "a customer," "a qualified lead," "a quality output" — that vary from department to department. AI can't fix any of that. It can only use, or get blocked by, the organizational architecture it walks into.

That realization is what's driving the biggest wave of corporate re-orgs since the dotcom era. Google restructured around "AI-first." Amazon split capabilities around AI. Microsoft did the same. Brian Armstrong's Coinbase memo in May became the template for AI-driven org redesign — we unpacked why every CEO is going to post a version of that notice.

These reorganizations are trying to build context: the connected web of information, decisions, and signals AI needs to actually be useful at scale. We covered why this matters in Context Is the Unlock. Without that context layer, you've got expensive software that can't see what's going on inside your company.

Airbnb and Meta have opposing plans on how to become AI-native

Both companies have decided their future depends on becoming AI-native. They're solving the same problem in completely opposite ways.

Airbnb is retrofitting collaboratively. Roberts's five moves at Stanford:

- Replace PDFs and slide decks with markdown as the default file format

- Turn the best executive work into reusable "skills" — documented workflows the whole team can use

- Pull insights from all the meeting and town-hall recordings the company already has

- Require leaders to actually build with AI personally, not just sign the budget

- Hire embedded organizational architects to redesign workflows from the ground up

The throughline is pull. Give people better tools and better context, then redesign the work together.

Meta is forcing change through pressure. Different moves:

- Push engineers to generate significantly more code as training data for internal AI systems

- Track AI usage and token consumption at the team and individual level

- Restructure around AI output metrics instead of traditional role hierarchies

- Send the clear signal that adoption is not optional

- Use layoffs to make the transition visibly mandatory

The throughline is push. Force adoption through pressure, measurement, and visible consequences.

Analysis of Airbnb's plan: why it works for any org

Airbnb's moves aren't innovative on their own. What makes them work is that they address the actual constraint on AI adoption, the structure of the organization itself, without needing fear to force it.

Markdown over PDFs and slide decks. Every PDF and locked-down doc your AI has to read costs more tokens and takes longer to process. Multiply that across every employee using AI all day, and you're paying a tax on bad file formats. Markdown is plain text: cheap to read, fast to process, and the AI doesn't have to work hard to make sense of it. The Stanford AI Index found that 81% of organizations blocked on AI scaling cite data constraints, mostly format problems. Switching the default file format goes straight at that constraint. A separate lever is caching: Anthropic's documentation on prompt caching says reusing a cached prompt prefix can cut input costs by up to 90%. That's real money for any team running AI at scale.
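To make the prompt caching arithmetic concrete, here is a rough sketch. It assumes a cache write costs 1.25x the base input price and a cache read costs 0.1x, which is my understanding of how Anthropic has priced the feature; check current pricing before relying on it. The token counts and the function name are made up for illustration.

```python
def relative_input_cost(prefix_tokens, fresh_tokens, calls,
                        write_mult=1.25, read_mult=0.10):
    """Return cached input cost divided by uncached input cost.

    prefix_tokens: shared context sent on every call (e.g. a team handbook)
    fresh_tokens:  new tokens unique to each call
    write_mult / read_mult: ASSUMED price multipliers for cache write and read
    """
    if calls < 1:
        raise ValueError("calls must be at least 1")
    # Without caching, every call pays full price for the whole prompt.
    uncached = calls * (prefix_tokens + fresh_tokens)
    # With caching, the first call writes the prefix and later calls read it.
    cached = (prefix_tokens * write_mult
              + (calls - 1) * prefix_tokens * read_mult
              + calls * fresh_tokens)
    return cached / uncached


# Hypothetical scenario: 10,000 tokens of shared context, 500 new tokens
# per request, 100 requests while the cache stays warm.
ratio = relative_input_cost(prefix_tokens=10_000, fresh_tokens=500, calls=100)
print(f"{ratio:.2f}")  # about 0.15, i.e. roughly an 85% saving in this scenario
```

The saving approaches the 90% ceiling only when the shared prefix dominates the prompt and is reused many times. A single call with caching is more expensive than one without.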

Reusable skills. A skill is a documented workflow the whole team can use. Once you've built one — say, the best version of "how we write a client proposal" or "how we generate a marketing brief" — every person in that function gets to operate at that level without reinventing it. The Anthropic Economic Index found the performance gap between experienced and new AI users is explained by practice inside structured systems. Skills are how you give everyone that practice without waiting years.
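For a sense of what a skill could look like once it's written down, here is a minimal sketch. Roberts didn't describe Airbnb's actual format, so the field names (`when_to_use`, `steps`, `quality_bar`) and the example content are my own assumptions. The point is that a skill is structured text a person or a model can pick up and follow.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """A documented, reusable workflow (hypothetical structure)."""
    name: str
    when_to_use: str
    steps: list[str]
    quality_bar: list[str] = field(default_factory=list)

    def to_markdown(self) -> str:
        """Render the skill as plain markdown so people and models can read it."""
        lines = [f"# {self.name}", "", f"When to use: {self.when_to_use}", "", "## Steps"]
        lines += [f"{i}. {step}" for i, step in enumerate(self.steps, start=1)]
        if self.quality_bar:
            lines += ["", "## Quality bar"]
            lines += [f"- {item}" for item in self.quality_bar]
        return "\n".join(lines) + "\n"


# Illustrative content only.
proposal = Skill(
    name="Client proposal",
    when_to_use="A prospect has asked for scope and pricing in writing.",
    steps=[
        "Summarize the client's problem in their own words.",
        "List the deliverables and what is out of scope.",
        "State the price, timeline, and next step.",
    ],
    quality_bar=["Fits on two pages.", "Every deliverable has an owner."],
)
print(proposal.to_markdown())
```

Rendering to markdown keeps the skill in the same cheap, readable format as the rest of the company's documents.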

Mining meeting recordings. Your company has hundreds of hours of recordings from meetings and town halls. Pre-AI, those were unusable except for whoever was in the room. Now they're a goldmine. You can see where leadership's stated priorities are actually landing with execution. You can spot the gap between "what we said we'd do this quarter" and "what we're actually working on." Microsoft's Work Trend Index found 31% of knowledge workers are stuck in what it calls "communication debt" — people who can't tell what actually matters because the signal got lost somewhere between leadership and them. Meeting transcripts surface that gap automatically.
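The simplest version of this gap check can be sketched in a few lines. This is a toy, not how Airbnb does it: it assumes the recordings are already transcribed to text, and it uses plain keyword counting where a real system would more likely use a language model. The priorities, keywords, and transcripts below are invented.

```python
import re


def priority_mentions(transcripts, priorities):
    """Count how often each stated priority shows up in meeting transcripts.

    priorities maps a priority name to the keywords that signal it.
    """
    counts = {name: 0 for name in priorities}
    for text in transcripts:
        lowered = text.lower()
        for name, keywords in priorities.items():
            for kw in keywords:
                # Whole-word, case-insensitive match for each keyword or phrase.
                pattern = r"\b" + re.escape(kw.lower()) + r"\b"
                counts[name] += len(re.findall(pattern, lowered))
    return counts


def unmentioned(counts, min_mentions=1):
    """Return priorities that leadership stated but teams rarely talk about."""
    return sorted(name for name, n in counts.items() if n < min_mentions)


# Invented example data.
transcripts = [
    "This sprint is all about checkout latency. Latency is the top issue.",
    "We spent the week on the pricing page redesign.",
]
priorities = {
    "Reduce latency": ["latency"],
    "Expand to new markets": ["new markets", "expansion"],
    "Pricing refresh": ["pricing"],
}

counts = priority_mentions(transcripts, priorities)
print(counts)
print(unmentioned(counts))  # ['Expand to new markets']
```

A priority that never comes up in working meetings is a candidate for exactly the kind of lost signal the Work Trend Index describes.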

Leaders building with AI personally. This is the biggest predictor of whether AI adoption works at all. When managers actually use the tools — not just talk about them — employees report a 17-point increase in the value they get from AI and a 30-point boost in trust in the company's AI direction. Leaders who delegate adoption don't develop the instincts to know what needs to change.

Analysis of Meta's aggressive plan: why they're pushing so hard

From the outside, Meta's approach looks brutal. From inside Meta's situation, it makes sense for reasons most people aren't talking about.

Meta is building toward something that requires more than just adoption: it needs training data at unprecedented scale, all of it specific to Meta. Generic models don't know what good engineering looks like inside Meta's systems. They don't know what a quality decision looks like in Meta's culture. Building an AI system that actually works for Meta means every engineer needs to be actively generating code, decisions, and workflows that train the model against Meta's own standards.

Mark Zuckerberg said on Meta's Q1 2026 earnings call that he expects AI to write the majority of Meta's code within 18 months. Yann LeCun, Meta's Chief AI Scientist, has described the goal as AI systems that reason through complex engineering problems with minimal human intervention. That ambition requires aggressive adoption right now, not gradual rollout over years.

Meta is also carrying the weight of its last big strategic bet. Reality Labs — its metaverse division — has lost more than $50 billion since 2019, with $16.1 billion gone in 2023 alone. The metaverse never materialized at scale. We've covered the strategic pattern behind those missteps. The pressure for AI to land differently is real, and it's shaping how hard Meta is pushing its people.

"Everyone is unhappy," one Meta employee told reporters. WIRED described the internal mood as "horrifically, historically low" — even as the company posted record profits. The employees who are miserable aren't miserable because Meta is being cruel. They're miserable because the transition to a fundamentally different way of working is genuinely painful when the new system hasn't proven itself, and high performers under the old metrics are getting cut anyway. Meta has decided that cost is acceptable for what they're trying to build.

Where Airbnb and Meta actually converge

The surface contrast looks total: collaborative versus coercive, retrofit versus rebuild. Underneath, they've reached the same conclusion.

Both companies are building toward an operating model where context is centralized and accessible to models, intent is machine-readable, work is designed around AI-human collaboration instead of human-first processes with AI bolted on, and the volume of work generated by AI is dominant rather than supplemental.

Listen to what their leaders actually say.

Roberts at Stanford: "We're training models on our best work so the median person can operate at the gold standard." Zuckerberg on Meta's Q1 earnings call: "We expect AI to write the majority of our code within 18 months." LeCun in recent public talks: AI systems should reason through complex engineering problems with minimal human execution overhead.

Three statements from companies that look like opposites — all describing the same destination.

The difference is the path. Airbnb is pulling people there. Meta is forcing it. Both are saying their future is a system where AI does the bulk of generating, and humans direct, judge, and decide.

What an AI-native org might look like in 2027 and 2028

The people building it have been clear about where it's going. Andrej Karpathy coined "vibe coding" and believes that "Software 3.0 (prompting/guiding AI agents)" will require an entirely new mindset and set of capabilities. The expectation is that AI will build and connect the infrastructure for us. Marc Andreessen and a16z have framed the broader shift as every knowledge worker becoming a directing intelligence on top of AI execution. Six shifts will define what AI-native looks like at scale.

-

Software gets built by smaller teams, faster. Boris Cherny, who leads Claude Code at Anthropic, describes a model where one engineer handles what once required three — AI takes implementation, the human takes intent and direction. Zuckerberg's 18-month timeline for AI-generated code isn't a distant forecast. Across the industry, it's already in motion.

-

Each person operates at a higher ceiling. When the best workflows are documented and reusable — the way Airbnb is doing with skills — the whole team runs at the level of whoever built them. The gap between your top performer and your median performer narrows. Expertise stops being hoarded in individual heads and starts compounding across functions.

-

Data-driven decision loops become standard for every knowledge worker. What marketing teams have today — A/B testing, real-time analytics, measurable feedback on every change — extends to every function. Every knowledge worker gets the same ability to test, measure, and iterate on decisions that a marketer has had for the last decade, which means a lot less guessing across the org. Strategy becomes evidence-based by default, not by exception.

-

Organizational silos become the most visible bottleneck. When AI is doing significant work, the places where information doesn't flow become impossible to ignore. You can't hide a broken handoff between marketing and product when the model keeps asking for context that doesn't exist. The silos that always slowed things down will surface as the primary drag on AI performance.

-

How work gets done shifts from execution to direction. Reporting becomes "here's what happened — show me the pattern." Planning becomes "here's what we're trying to do — generate options." The skill that matters most is being precise about what you want, not fast at producing it. Human contribution moves up the abstraction layer.

-

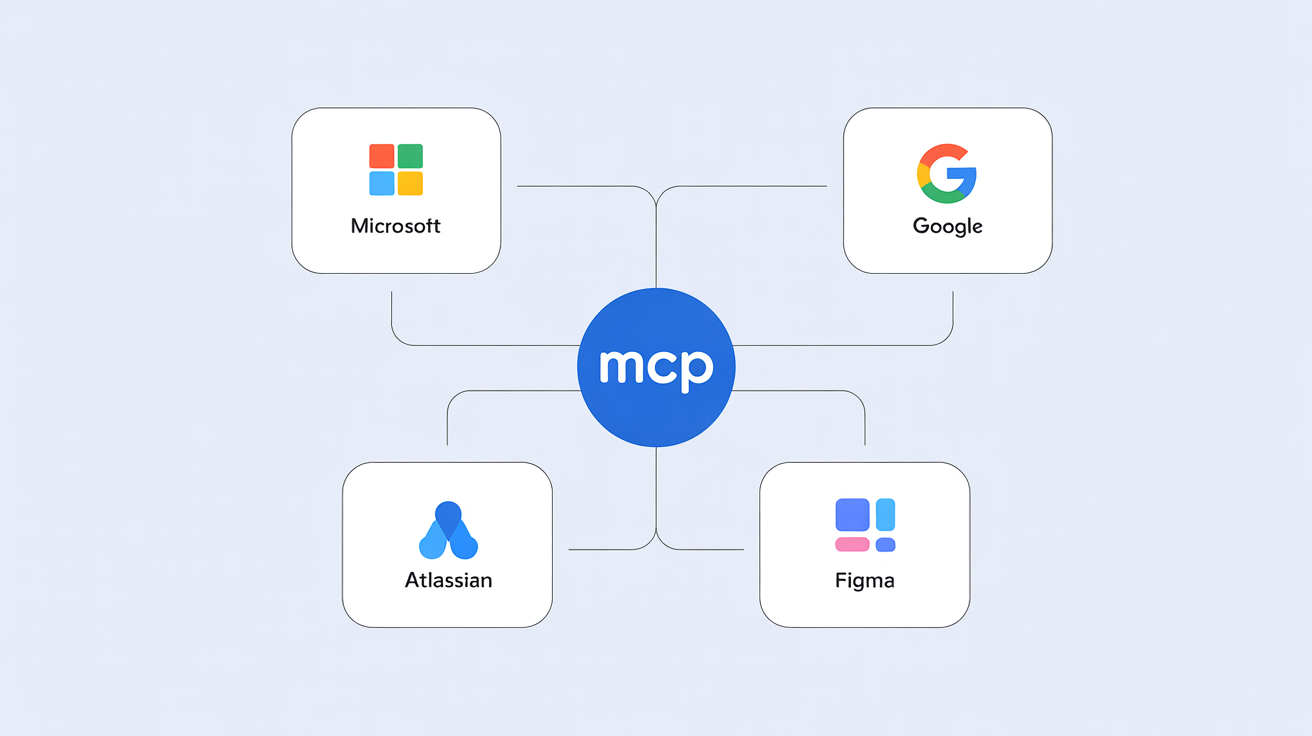

Organizations that leverage context at scale pull away from those that didn't. If your organization doesn't have its own proprietary context model connected to your most important platforms — Microsoft, Google, Salesforce, Atlassian, etc. — you will be flying blind. Ensuring every employee is connected to context means they're skipping the learning curve on what to do, why, and what outputs need to look like. They can focus on the work that actually matters.

If you're thinking about what this transition means for your own role, the Knowledge Worker Playbook lays out the personal version of the decision. For the framework on how leadership is actually executing these reorganizations, the CEO Layoffs Notice piece breaks down the pattern.

What separates winners and losers is whether the organization was built to use what AI can do. The models keep getting more capable. Orgs that haven't done the structural work won't be able to take advantage of any of it.

Brittany Hobbs is founder of AI Value Accelerator. She's a researcher and analyst answering the questions every leader is wrestling with right now — how AI adoption actually happens, and how to empower teams to actually succeed with it.


Hosted by Arpy Dragffy and Brittany Hobbs. Arpy runs PH1 Research, a product adoption research firm, and leads AI Value Acceleration, enterprise AI consulting.
